
Introduction

Offloading Layer 7 proxies to high-performance user-space network stacks is no longer optional for handling modern edge traffic at scale. When integrating Nginx L7 processing into AsterNOS-VPP (Enterprise SONiC), we made a seemingly counter-intuitive architectural decision: we rejected the highly intrusive VCL (VPP Communication Library) zero-copy approach in favor of the LDP (LD_PRELOAD) mechanism. This wasn’t a technical compromise, but a deliberate engineering choice to deliver hardware-accelerated performance without sacrificing the agility of an open software ecosystem.

Here is a deep dive into the architectural trade-offs and how AsterNOS-VPP uses LDP to bridge extreme throughput with standard DevOps workflows.

Ⅰ. VPP Nginx Integration Evolves from VCL to LDP

When integrating Nginx into a VPP (Vector Packet Processing) user-space data plane, architects typically face two paths.

Path A: VCL Architecture — The “Code Island” Built for Benchmarks

The VCL approach requires modifying Nginx’s core network logic at the source-code level, replacing standard POSIX socket calls with VCL APIs.

The Use Case: This deeply customized approach aims to squeeze out the absolute maximum performance via pure zero-copy. It is well-suited for heavily closed “appliance” environments like 5G Core (UPF) or High-Frequency Trading (HFT) nodes requiring microsecond latency.

The Fatal Flaw: For enterprise gateways, this architecture is an operational nightmare. It tightly couples Nginx with a specific version of the VPP library. This closed-loop design severs the application from the open-source ecosystem—users cannot freely load Nginx community modules. Worse, when 0-day vulnerabilities strike, users are trapped waiting for the equipment vendor to merge source code and release a new firmware version.

Path B: LDP Architecture — The AsterNOS Engineering Choice

What do enterprise edge gateways actually need? Speed, yes, but also rock-solid stability to handle diverse workloads (WAF, GeoIP, Portal auth).

Our Motivation: We deliberately accepted the microscopic overhead introduced by LDP to gain 100% compatibility with the vast Nginx community ecosystem. For real-world engineering, the business agility gained through architectural openness far outweighs the marginal gains seen in sterile benchmark tests.

VCL vs. LDP: Nginx Architecture Comparison Matrix

To clearly illustrate the architectural differences, we compared them across real-world R&D and DevOps dimensions:

| Evaluation Metric | VPP + VCL (Intrusive Modification) | AsterNOS-VPP (LDP Architecture) |
| --- | --- | --- |
| Core Design Goal | Closed appliance; maximizing extreme benchmark metrics. | Open platform; maximizing ecosystem and agility. |
| Module Extensibility | Very Poor (Requires vendor to adapt source code for 3rd-party modules). | Excellent (100% plug-and-play for native binary modules). |
| CVE Patch Cycle | Slow (Relies on deep source code merges and recompilation). | Instant (Directly update base binaries without modifying source). |
| Non-Standard Traffic | Weak (Lacks efficient fallback for non-standard flows). | Strong (Transparently falls back to the Linux kernel for secure processing). |

Ⅱ. The LDP Architecture: Transparent Layer 7 Acceleration

By adopting the LDP approach, AsterNOS-VPP strikes the perfect balance between extreme performance and an open ecosystem, delivering three core engineering benefits:

- 100% Native Binary Compatibility: The biggest dividend of the LDP architecture is that the system runs a completely unmodified, native Nginx binary. You don’t have to endure custom toolchains or recompile from source to hook into VPP. For existing reverse proxy setups, you can migrate your accumulated Nginx server blocks, along with their location and rewrite rules, as-is. By importing your configuration snippets via the unified AsterNOS CLI, you retain the authentic Nginx management experience while enjoying VPP’s brute-force software acceleration.

- Lightning-Fast CVE Response: When high-risk vulnerabilities emerge, traditional VCL setups force you to wait months for the vendor to patch, adapt, and recompile Nginx against VPP. Because LDP is entirely decoupled from the native binary, updating the base Nginx container image requires zero custom code merging, shrinking the vulnerability window from months to the time it takes to roll out the updated image.

- Smart Transparent Fallback: Enterprise intranets are messy, often filled with non-standard protocols or out-of-band management traffic. LDP handles this elegantly: if traffic doesn’t meet the criteria for VPP acceleration, LDP transparently hands it off to the native Linux kernel network stack. This avoids the painful secondary development that VCL requires to handle non-standard flows.

Ⅲ. How the LDP Mechanism Bypasses the Kernel Network Stack

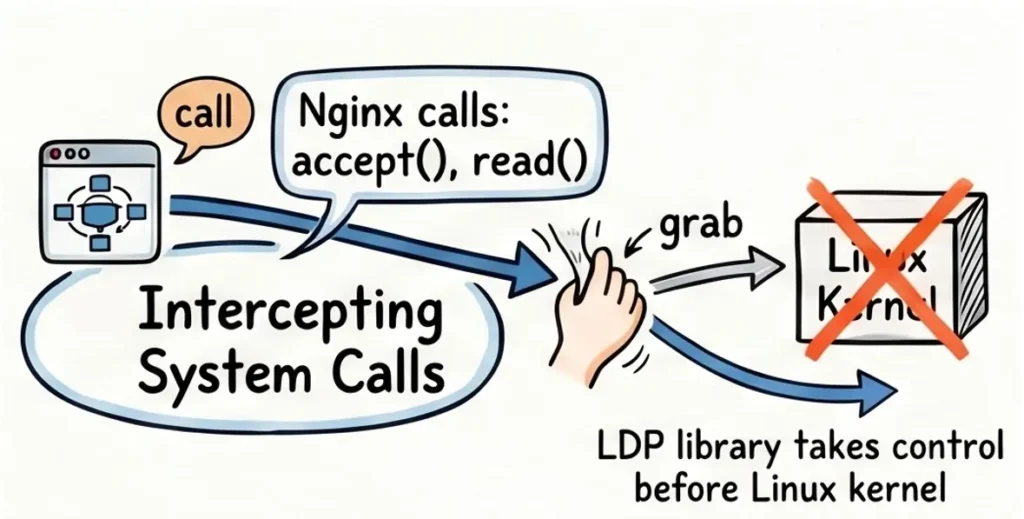

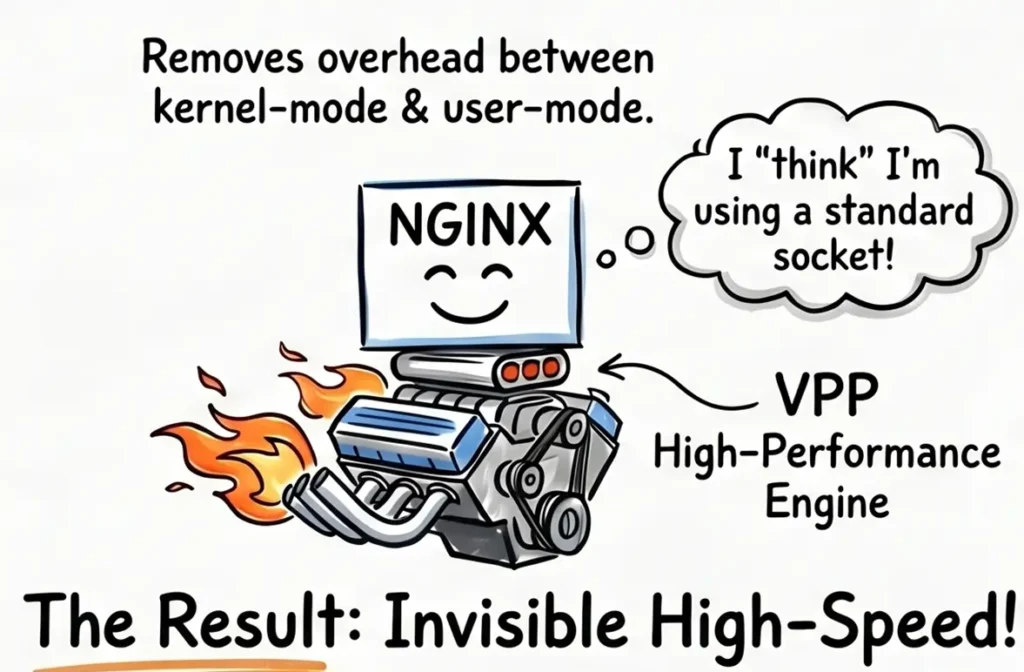

The essence of the LDP (LD_PRELOAD) mechanism lies in transparent hijacking. From the Nginx process’s perspective, it believes it is interacting with a standard Linux kernel, while the underlying data transport has actually been taken over by VPP.

1. Precision Syscall Interception:

When Nginx starts, AsterNOS uses Linux’s LD_PRELOAD environment variable to inject the libvcl_ldpreload.so shared library, so every Nginx worker process runs with it loaded. When Nginx makes standard socket(), accept(), or read() calls, the dynamic linker resolves them to this library’s implementations instead of libc’s, so control is intercepted before any system call reaches the kernel.
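The interception works because of the order in which the dynamic linker searches loaded objects for a symbol. The toy Python model below captures that order: the executable first, then LD_PRELOAD libraries, then ordinary dependencies such as libc, with the first definition winning. `next_definition` approximates `dlsym(RTLD_NEXT, ...)`, which a preload shim can use to reach libc's own implementation when it passes a call through. The object names and export lists are illustrative only, not the real contents of libvcl_ldpreload.so.

```python
from typing import Dict, List, Optional, Set

Exports = Dict[str, Set[str]]


def lookup_scope(executable: str, preloads: List[str], needed: List[str]) -> List[str]:
    """Simplified global lookup scope: executable, then LD_PRELOAD objects, then dependencies."""
    return [executable, *preloads, *needed]


def resolve(symbol: str, scope: List[str], exports: Exports) -> Optional[str]:
    """Return the first object in the scope that defines `symbol`, or None."""
    for obj in scope:
        if symbol in exports.get(obj, set()):
            return obj
    return None


def next_definition(symbol: str, caller: str, scope: List[str], exports: Exports) -> Optional[str]:
    """Approximate dlsym(RTLD_NEXT, symbol) called from `caller`: keep searching after it."""
    return resolve(symbol, scope[scope.index(caller) + 1:], exports)


# Illustrative export tables; hypothetical subsets, not real symbol lists.
EXPORTS: Exports = {
    "nginx": {"main"},
    "libvcl_ldpreload.so": {"socket", "accept4", "read", "write", "epoll_wait"},
    "libc.so.6": {"socket", "accept4", "read", "write", "epoll_wait", "open", "printf"},
}

if __name__ == "__main__":
    scope = lookup_scope("nginx", ["libvcl_ldpreload.so"], ["libc.so.6"])
    for sym in ("socket", "read", "open"):
        print(sym, "->", resolve(sym, scope, EXPORTS))
    # The shim's pass-through path reaches libc's real implementation:
    print("RTLD_NEXT socket ->", next_definition("socket", "libvcl_ldpreload.so", scope, EXPORTS))
```

Drop the preload entry from the scope and `socket` resolves straight to libc, which is why the same unmodified binary behaves as stock Nginx when started without LD_PRELOAD.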

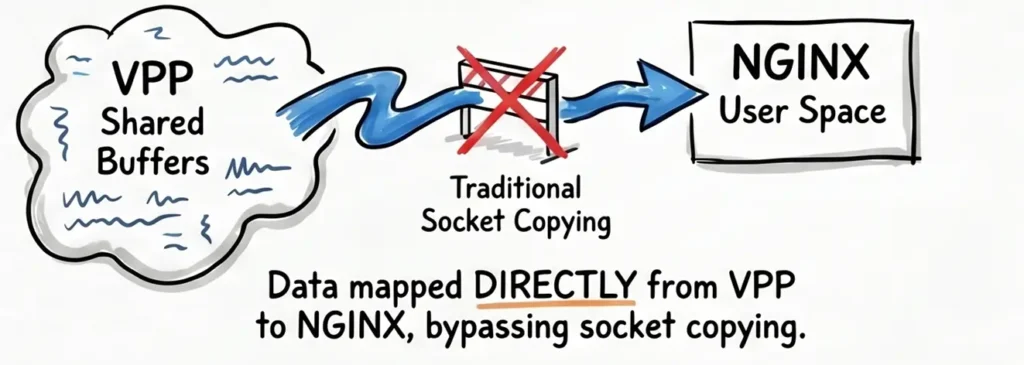

2. High-Speed Shared Memory Transport:

Once control is intercepted, the LDP library communicates with the VPP main process through a shared-memory region. It checks session state in VPP’s Session Layer and copies arriving data from VPP’s shared buffers into the buffer Nginx supplied, without a trip through the kernel.
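A toy model of the shared-memory handoff may help. The sketch below is a single-producer, single-consumer byte FIFO placed in an anonymous shared mapping (`mmap.mmap(-1, ...)`, which a forked child would also see). The producer side stands in for VPP and the consumer side for LDP servicing a `read()`. It is only loosely inspired by the FIFOs in VPP's session layer and does not reproduce their layout, locking, or event notification; the class name `ToyFifo` is hypothetical. In this model, `dequeue()` copies bytes into a new buffer, mirroring the fact that a POSIX `read()` fills a buffer supplied by the caller.

```python
import mmap
import struct

HEADER = struct.Struct("<QQ")  # head (total bytes read), tail (total bytes written)


class ToyFifo:
    """Toy SPSC byte FIFO in a shared mapping; no locking or memory barriers (demo only)."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        # Anonymous mapping; on Unix it is MAP_SHARED, so a forked child shares it.
        self.mem = mmap.mmap(-1, HEADER.size + capacity)
        HEADER.pack_into(self.mem, 0, 0, 0)

    def _copy_in(self, pos: int, data: bytes) -> None:
        off = pos % self.capacity
        first = min(len(data), self.capacity - off)  # bytes that fit before wrapping
        base = HEADER.size
        self.mem[base + off : base + off + first] = data[:first]
        self.mem[base : base + len(data) - first] = data[first:]  # wrapped remainder

    def enqueue(self, data: bytes) -> int:
        """Producer side (stands in for VPP): append as much of `data` as fits."""
        head, tail = HEADER.unpack_from(self.mem, 0)
        n = min(len(data), self.capacity - (tail - head))
        self._copy_in(tail, bytes(data[:n]))
        HEADER.pack_into(self.mem, 0, head, tail + n)  # publish the new tail
        return n

    def dequeue(self, max_bytes: int) -> bytes:
        """Consumer side (stands in for LDP's read()): copy out up to max_bytes."""
        head, tail = HEADER.unpack_from(self.mem, 0)
        n = min(max_bytes, tail - head)
        off = head % self.capacity
        first = min(n, self.capacity - off)
        base = HEADER.size
        out = self.mem[base + off : base + off + first] + self.mem[base : base + n - first]
        HEADER.pack_into(self.mem, 0, head + n, tail)  # release the consumed space
        return out

    def close(self) -> None:
        self.mem.close()


if __name__ == "__main__":
    fifo = ToyFifo(8)
    fifo.enqueue(b"GET /")   # "VPP" delivers 5 bytes
    print(fifo.dequeue(3))   # "Nginx" reads 3 of them
    fifo.close()
```

Keeping head and tail as monotonically increasing byte counts, rather than wrapped offsets, makes full and empty easy to tell apart: used space is simply tail minus head.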

3. Bypassing the Kernel Bottleneck:

This process entirely bypasses the heavy Linux kernel network stack, avoiding the kernel’s context switches and socket-buffer copies. The actual traffic is handled directly by VPP’s high-performance user-space protocol stack (including heavy lifting like TLS crypto-offloading), achieving pure software-defined acceleration.

Ⅳ. Benefits of 100% Native Nginx on VPP

In summary, 100% native Nginx on VPP delivers advantages in two main aspects:

Network Advantages: High Throughput, Low Latency

- Bypassing the Kernel: VPP moves packet processing to user space, removing the Linux kernel bottleneck so throughput can approach physical hardware limits.

- Low Latency: Drastically reduces data copying and per-packet kernel processing, substantially cutting processing time.

- High-Concurrency Stability: Maintains rock-solid performance during DDoS attacks or massive traffic spikes without CPU exhaustion.

Customer Benefits: Cost Efficiency and Ecosystem Agility

- 10-to-1 Consolidation: One AsterNOS switch handles the Layer 7 workload of ten traditional servers, significantly cutting hardware and power costs.

- Maximum Hardware ROI: Spends CPU cycles on traffic instead of losing them to kernel overhead.

- Total Ecosystem Freedom: 100% compatible with all community Nginx modules (WAF, GeoIP, etc.) with no vendor lock-in.

- Seamless Management: Minimal learning curve; simply import your existing Nginx configurations directly via the SONiC CLI.

Ⅴ. Conclusion

For too long, network architects introducing user-space protocol stacks faced a painful ultimatum: to get VPP’s incredible throughput, you had to endure the closed ecosystem and operational hell of deeply modified code.

But true enterprise-grade infrastructure shouldn’t force users to choose between peak performance and maintainability.

This is exactly why AsterNOS-VPP chose the LDP architecture. We refuse to build “code islands” isolated from the community. Through transparent syscall hijacking, we successfully engineered a seamless fusion of hardware-level acceleration and an uncompromisingly open software ecosystem.

Today, VPP finally has a 100% native Nginx.

Leave the low-level network processing and acceleration to us. We give you back the full open-source ecosystem, the ability to patch components the day a fix ships, and the standard DevOps experience you already know and trust.

Ⅵ. Appendix: A Simple SONiC CLI to Deploy Nginx and Isolate CPU Cores

Engineers often ask: “Does enabling a user-space protocol stack require complex low-level memory mapping?”

On AsterNOS-VPP, the complexity is zero.

Because we deeply integrated the LDP mechanism under the hood, you don’t need to manually prepend cumbersome LD_PRELOAD variables at the OS level. Through AsterNOS’s unified CLI (built on SONiC), you can simply enable Nginx and isolate CPU cores. The system handles the transparent hijacking automatically.

```
# 1. Initialize Nginx globally and allocate dedicated CPU cores for guaranteed performance
sonic# configure terminal
sonic(config)# nginx start
sonic(config)# cpu_core nginx_num 2 vpp_num auto

# 2. Directly import and reuse your existing Nginx server block configurations
sonic(config)# nginx update server /home/admin/proxy.conf

# 3. Apply changes with millisecond-level hot reloads
sonic(config)# nginx reload
```
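For readers curious about what the CLI saves them from, here is a rough Python sketch of a manual equivalent: reserve cores for Nginx, leave the rest for VPP, and build an environment that preloads the LDP library. Everything here is an assumption for illustration: the library and `vcl.conf` paths, the `plan_launch` helper, and the core-splitting policy (reserving the lowest core for the control plane is a common convention, not documented behavior of `vpp_num auto`). `VCL_CONFIG` is the environment variable upstream VCL reads to locate its configuration file; AsterNOS may configure VCL differently.

```python
from typing import Dict, List, Mapping, Tuple

# Hypothetical paths for illustration; real locations depend on the VPP build.
LDP_LIB = "/usr/lib/libvcl_ldpreload.so"
VCL_CONF = "/etc/vpp/vcl.conf"


def split_cores(available: List[int], nginx_num: int) -> Tuple[List[int], List[int]]:
    """Assumed policy, not documented AsterNOS behavior: reserve the lowest core for
    the control plane, give Nginx the next `nginx_num` cores, and VPP the rest."""
    usable = sorted(available)[1:]
    if nginx_num < 1 or nginx_num >= len(usable):
        raise ValueError("need at least one core each for Nginx and VPP")
    return usable[:nginx_num], usable[nginx_num:]


def ldp_environment(base: Mapping[str, str]) -> Dict[str, str]:
    """Copy `base`, put the LDP library first in LD_PRELOAD, and point VCL at its config."""
    env = dict(base)
    existing = env.get("LD_PRELOAD", "")
    env["LD_PRELOAD"] = f"{LDP_LIB}:{existing}" if existing else LDP_LIB
    env["VCL_CONFIG"] = VCL_CONF
    return env


def plan_launch(available: List[int], nginx_num: int,
                base_env: Mapping[str, str]) -> Dict[str, object]:
    """Bundle the core split and the launch environment (hypothetical helper)."""
    nginx_cores, vpp_cores = split_cores(available, nginx_num)
    return {"nginx_cores": nginx_cores, "vpp_cores": vpp_cores,
            "env": ldp_environment(base_env)}


if __name__ == "__main__":
    plan = plan_launch(list(range(8)), 2, {"PATH": "/usr/bin"})
    print(plan["nginx_cores"], plan["vpp_cores"])
    print(plan["env"]["LD_PRELOAD"])
```

On Linux, a supervisor could apply such a plan by calling `os.sched_setaffinity(0, cores)` from a `preexec_fn` passed to `subprocess.Popen` and passing `env=plan["env"]`; the CPU affinity mask set in the child is preserved across exec.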
