
Introduction

AsterNOS-VPP is a network operating system (NOS) developed from deep experience in open networking. For routing scenarios, it combines SONiC as the control plane with DPDK-based VPP for data plane forwarding.

Implementing HQoS on SONiC and VPP is effectively implementing HQoS on AsterNOS-VPP. This article explores why we support HQoS on AsterNOS-VPP, the limitations of traditional QoS, and the constraints of HQoS under AsterNOS-VPP.

Why Implement HQoS on SONiC and VPP?

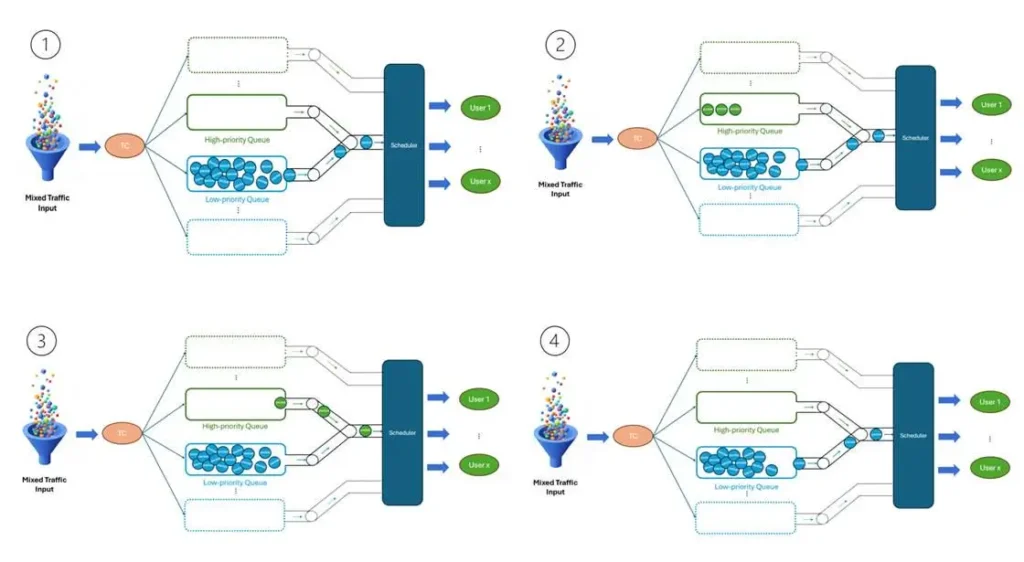

As enterprise network edges evolve towards multi-tenant and multi-service environments, the competition for bandwidth becomes the primary challenge. Traditional routing and “Flat QoS” strategies often struggle when dealing with complex traffic models where VIP users, guest Wi-Fi, and critical VoIP services share a single physical uplink.

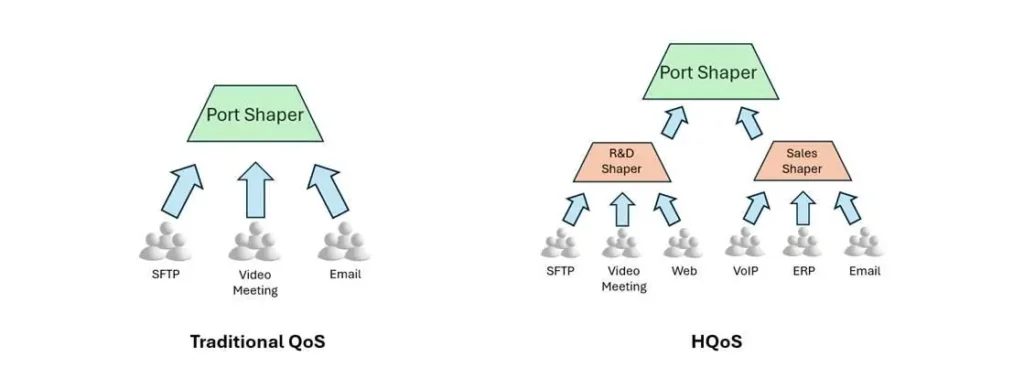

AsterNOS-VPP introduces HQoS (Hierarchical Quality of Service) capabilities. Unlike standard QoS, which operates on a simple “Interface + Queue” model, HQoS introduces the concept of “Organization” into the network. It models traffic policies on real-world structures (Tenants, Departments, and Users), providing a multi-layered governance mechanism.

What Is the Main Problem with Traditional QoS?

Why do we need to move beyond standard interface-based QoS? Existing solutions face significant bottlenecks in modern multi-tenant environments, and implementing HQoS on SONiC and VPP addresses many of them.

Limitation A:

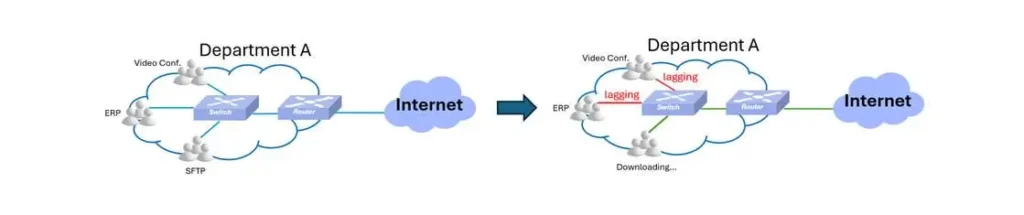

The “Noisy Neighbor” Effect: In traditional architectures, all traffic streams compete for the same physical buffer. If a non-critical user (e.g., Guest Wi-Fi) initiates a massive download, they can exhaust the interface buffer instantly.

Result: Critical packets (e.g., R&D VoIP) arriving milliseconds later encounter a full buffer and are dropped (Tail Drop), regardless of their priority queue settings. The “queue” mechanism never gets a chance to work because the “door” is blocked.
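The effect can be reproduced with a toy model. The sketch below is not AsterNOS-VPP code: it assumes a hypothetical shared buffer of 8 packets and a simple tail-drop admission rule, and it shows a voice packet being dropped before any priority scheduler could act on it.

```python
from collections import deque

BUFFER_SLOTS = 8  # hypothetical shared interface buffer, in packets


def enqueue_all(arrivals, capacity=BUFFER_SLOTS):
    """Admit packets in arrival order into one shared buffer (tail drop)."""
    buffer, dropped = deque(), []
    for packet in arrivals:
        if len(buffer) < capacity:
            buffer.append(packet)
        else:
            # Buffer is full: the packet is discarded on arrival,
            # whatever priority queue it was destined for.
            dropped.append(packet)
    return list(buffer), dropped


# A guest download burst arrives first, then one high-priority voice packet.
# Each packet is (label, priority queue).
arrivals = [("guest-download", 0)] * 10 + [("rd-voip", 7)]
admitted, dropped = enqueue_all(arrivals)

print("voice admitted:", ("rd-voip", 7) in admitted)  # False
print("voice dropped:", ("rd-voip", 7) in dropped)    # True
```

The voice packet's priority value is never consulted, because admission to the shared buffer happens before scheduling.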

Limitation B:

Lack of Granular Control: Standard QoS applies policies to the physical port. It cannot distinguish between “Department A” and “Department B” if they share the same subnet or interface.

Result: Administrators cannot enforce Service Level Agreements (SLAs) such as “The R&D team gets a guaranteed 5Gbps, regardless of individual user actions.”

How HQoS Works on SONiC and VPP

To solve these problems, AsterNOS-VPP shifts the scheduling paradigm from “Port-Centric” to “Structure-Centric”.

How it solves the problem: AsterNOS-VPP constructs a four-level logical tree: Port -> Group -> User -> Queue.

Deconstructing the “H”: The 4-Level Logic Tree

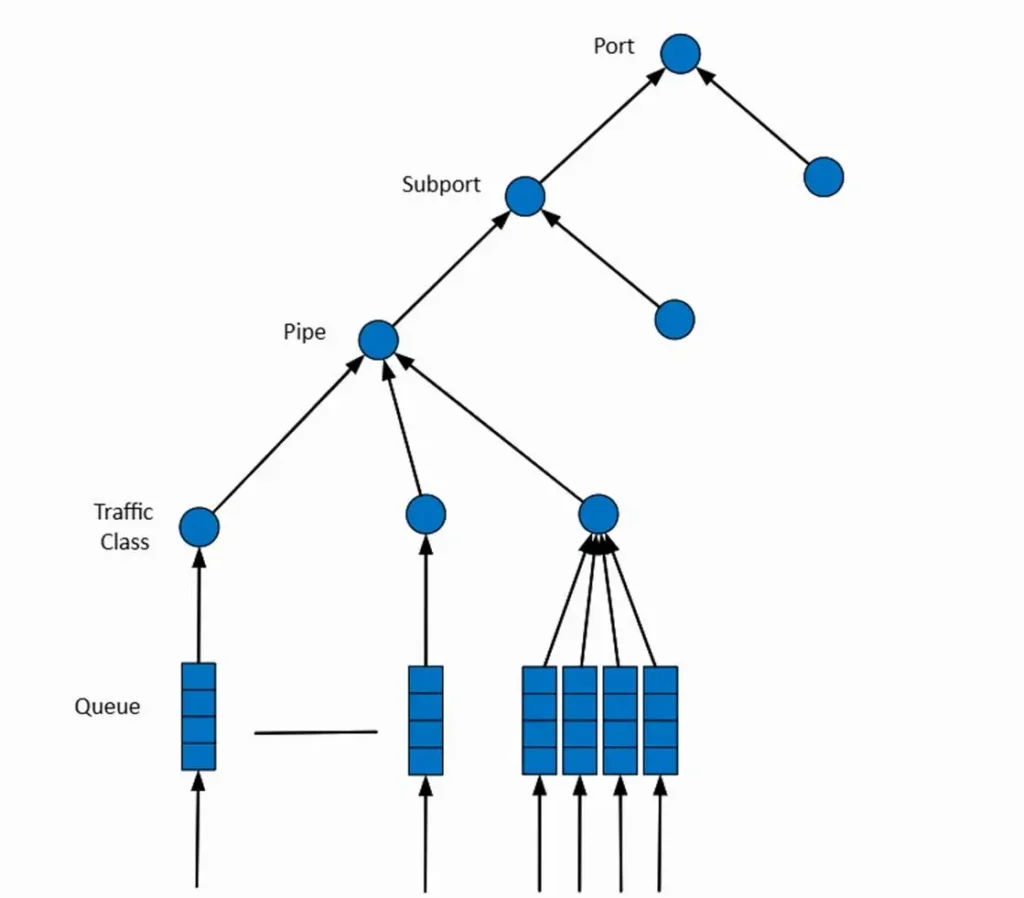

To truly understand how AsterNOS-VPP governs traffic, we must look inside the Hierarchy. AsterNOS-VPP implements a standardized 4-Level Scheduler that maps complex organizational structures directly to hardware resources.

Think of it as a corporate budget approval process: for an expense (packet) to be approved (transmitted), it must pass through every level of management hierarchy.

The Four Levels Defined:

- Level 1: Port
  - Role: Represents the physical interface bandwidth (e.g., 10Gbps).
  - Function: The ultimate constraint. No matter how much bandwidth you allocate below, the total cannot exceed the pipe’s physical limit.

- Level 2: Subport (Group)
  - Role: Aggregates all users belonging to a specific entity (e.g., “R&D Dept” or “Guest Wi-Fi”).
  - Function: The “Dam” Layer. This is where you apply the most critical Shaping (PIR) policies to isolate tenants. Even if the physical port is empty, the “Guest Group” cannot exceed its assigned 200Mbps cap.

- Level 3: Pipe (User)
  - Role: Represents a specific IP address or Subnet (e.g., Employee A’s laptop).
  - Function: The “Distribution” Layer. It controls the peak rate for a single endpoint to prevent one user from starving others within the same group.

- Level 4: Queue
  - Role: Differentiates traffic types (Voice, Video, Data) for a single user.
  - Function: The “Priority” Layer. This is where Strict Priority (SP) and DWRR scheduling algorithms operate to ensure critical packets jump the line.
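One consequence of the tree is that a user's achievable rate is bounded by the tightest limit on the path up to the port. The following is a minimal sketch of that idea, not the AsterNOS-VPP data model: the `Node` class, the function name, and the per-user rates are hypothetical, while the 10Gbps, 5Gbps, and 200Mbps figures come from the examples in this article. The Queue level is omitted because it decides ordering rather than a rate ceiling.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    """One scheduler node: a port, a group (subport), or a user (pipe)."""
    name: str
    pir_bps: float                   # peak rate allowed at this level
    parent: Optional["Node"] = None  # None for the port (root of the tree)


def effective_ceiling_bps(node: Node) -> float:
    """Return the lowest PIR found on the path from this node to the port."""
    ceiling = node.pir_bps
    while node.parent is not None:
        node = node.parent
        ceiling = min(ceiling, node.pir_bps)
    return ceiling


port = Node("port", 10e9)
guest = Node("guest-wifi", 200e6, parent=port)
rd = Node("rd-dept", 5e9, parent=port)
guest_user = Node("guest-laptop", 500e6, parent=guest)  # hypothetical rate
rd_user = Node("employee-a", 1e9, parent=rd)            # hypothetical rate

# The guest is capped by its group; the employee by their own user limit.
print(effective_ceiling_bps(guest_user))
print(effective_ceiling_bps(rd_user))
```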

According to the device configuration guide, the traffic control is strictly enforced using two key parameters: PIR (Peak Information Rate) and PBS (Peak Burst Size).

Although the hardware handles the complex math, the logic follows a rigorous “Credit Check” system across layers. Imagine a Voice packet (Queue 7) generated by User A in the R&D Group. To leave the interface, it must “pay” credits to every layer simultaneously (A “Logical AND” check):

- Step 1: The Queue Check (Level 4)
  - Action: The Scheduler looks at Queue 7. Is it this queue’s turn? (Strict Priority logic).
  - Result: Pass. (Voice has priority).

- Step 2: The User Check (Level 3)
  - Action: The packet climbs to the User level. Does the User’s “Wallet” have enough tokens?
  - Result: Pass. (User A consumes tokens).

- Step 3: The Group Check (Level 2)
  - Action: The packet proceeds to the Group (Department) level. Does the R&D Group have enough total tokens left?
  - Result: Pass if tokens remain; Fail/Wait if the Group’s bucket is empty (as when the “Guest Group” has exhausted its 200Mbps cap).

- Step 4: The Port Check (Level 1)
  - Action: Finally, does the physical wire have space?
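The token checks in Steps 2 to 4 can be sketched with one token bucket per level. This is an illustrative model only, not the device's scheduler: the class and function names are hypothetical, rates are assumed to be in bytes per second, and the Queue check from Step 1 is left out because it is about ordering rather than tokens. The point is the “Logical AND”: a packet is sent, and tokens are charged, only when every level can pay.

```python
class TokenBucket:
    """Token bucket refilled at `pir` bytes/s and capped at `pbs` bytes."""

    def __init__(self, pir, pbs):
        self.pir = pir
        self.pbs = pbs
        self.tokens = pbs  # start with a full bucket
        self.last = 0.0    # time of the last refill, in seconds

    def refill(self, now):
        elapsed = now - self.last
        self.tokens = min(self.pbs, self.tokens + elapsed * self.pir)
        self.last = now


def try_send(size, now, buckets):
    """Send only if the user, group, and port buckets can all pay."""
    for bucket in buckets:
        bucket.refill(now)
    if all(bucket.tokens >= size for bucket in buckets):
        for bucket in buckets:
            bucket.tokens -= size
        return True
    return False  # the packet waits; no level is charged


# Hypothetical numbers: the group bucket is the tightest level.
user = TokenBucket(pir=1_000_000, pbs=3000)
group = TokenBucket(pir=1_000_000, pbs=1500)
port = TokenBucket(pir=10_000_000, pbs=15000)
path = [user, group, port]

results = [
    try_send(1500, 0.0, path),    # all three levels have tokens
    try_send(1500, 0.0, path),    # group bucket is empty: wait
    try_send(1500, 0.002, path),  # group bucket has refilled
]
print(results)  # [True, False, True]
```

In the second attempt the user still holds 1500 tokens, yet the packet is held back because the group level fails the check.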

Two Underlying Architectures for Implementing HQoS on SONiC and VPP

AsterNOS-VPP features a unified configuration model capable of driving two distinct underlying architectures. By seamlessly integrating both Software and Hardware Architectures, it delivers an optimal balance between cloud-scale scalability and deterministic, wire-speed performance.

Software Architecture (VPP):

Built upon the high-performance VPP (Vector Packet Processing) engine.

- Mechanism: HQoS logic is executed in software using system RAM for state tracking.

- Benefit: High Scalability. Supports tens of thousands of logical users/queues, limited only by memory. Ideal for high-density cloud gateways.

Hardware Architecture (Offload):

Asterfusion ET2500 supports both generic CPU scheduling and hardware acceleration. Enabling the Offload feature is the key to unlocking the device’s true potential.

- Mechanism: When offload is enabled, the HQoS policy is moved from the general-purpose CPU to the Marvell Octeon chip’s dedicated NPU (Traffic Manager) unit.

- Benefit: Zero CPU Impact & Deterministic Latency.
  - Zero CPU Load: Even at line-rate forwarding, the CPU usage remains at 0% because the NPU handles the scheduling logic.
  - Deterministic Latency: The NPU uses a pipeline architecture with fixed processing times, eliminating the jitter caused by CPU interrupts and context switches found in software scheduling.

Performance Comparison

Different execution engines follow different scheduling philosophies. The following table contrasts the Software Scheduler with the Hardware Scheduler:

| Feature | Software | Hardware |
| --- | --- | --- |
| Logic Detail | 1. Process SP queues until empty. 2. Allocate remaining bandwidth to DWRR queues. 3. Schedule DWRR based on weights. | 1. Process by Priority Level. 2. Queues with the same priority are scheduled concurrently. 3. Intra-group scheduling uses DWRR. |
| Execution Engine | CPU | NPU |
| CPU Load | High (Scales with traffic) | Zero (Offloaded) |
| Jitter | Variable | Deterministic (Nanosecond level) |
| Scalability | Limited by RAM | Limited by Hardware Nodes |
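The three-step logic in the Software column can be illustrated with a short model. This is a sketch under simplifying assumptions, not the VPP implementation: it works on a static snapshot of queues (a live scheduler would return to the SP queues whenever new packets arrive), and the function name, the 1500-byte quantum, and the packet sizes are hypothetical.

```python
from collections import deque


def schedule(strict, dwrr, quantum=1500):
    """Return the transmit order: strict-priority queues first, then DWRR.

    strict: list of deques, highest priority first.
    dwrr:   list of (deque, weight) pairs.
    Packets are (label, size_in_bytes) tuples.
    """
    order = []
    # Step 1: drain the strict-priority queues in priority order.
    for queue in strict:
        while queue:
            order.append(queue.popleft())
    # Steps 2-3: share what remains between DWRR queues by weight.
    deficits = [0] * len(dwrr)
    while any(queue for queue, _ in dwrr):
        for i, (queue, weight) in enumerate(dwrr):
            if not queue:
                continue
            deficits[i] += quantum * weight
            # Send while the head packet fits in the accumulated deficit.
            while queue and queue[0][1] <= deficits[i]:
                packet = queue.popleft()
                deficits[i] -= packet[1]
                order.append(packet)
            if not queue:
                deficits[i] = 0  # an emptied queue keeps no credit
    return order


voice = deque([("voice", 200)] * 2)
video = deque([("video", 1500)] * 4)
data = deque([("data", 1500)] * 4)

order = schedule([voice], [(video, 2), (data, 1)])
labels = [label for label, _ in order]
print(labels)
```

With a weight of 2 against 1, video sends two packets for every data packet while both queues are backlogged, and voice always leaves first.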

Practical Examples

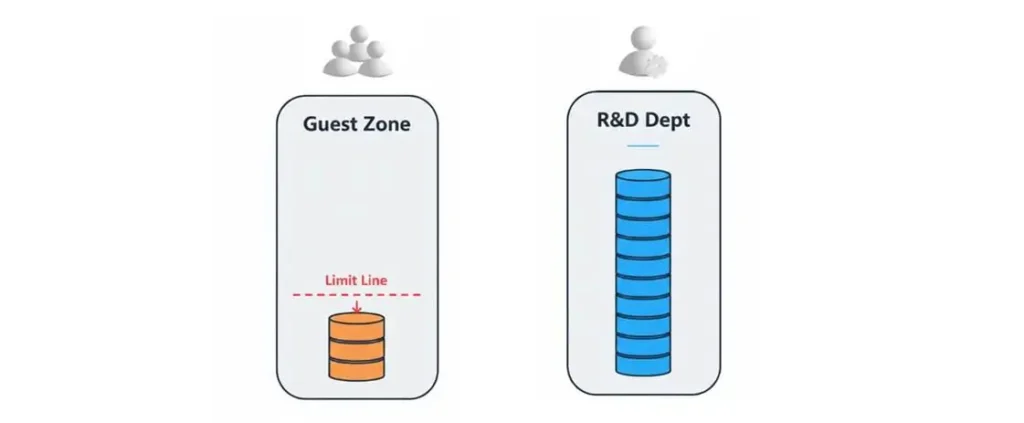

Use Case 1: Multi-Tenant Resource Isolation

Benefit: Prevent “Bandwidth Hogs” from affecting business operations.

Example: Restrict the “Guest Zone” to a hard limit of 200Mbps, while allocating 5Gbps to the “R&D Dept”.

Config Code:

Define Group Policies (Shaping at Level 2)

hqos-user-group-profile guest-zone

user-profile emp-standard shaping pir 25000000

hqos-user-group-profile rd-dept

user-profile emp-standard shaping pir 625000000
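The PIR values above match the stated limits if PIR is expressed in bytes per second: 200Mbps divided by 8 is 25,000,000, and 5Gbps divided by 8 is 625,000,000. That unit is an inference from the numbers, not something this article states, so it should be checked against the configuration guide. Under that assumption, a small helper (the function name is hypothetical) converts a line rate into a PIR value:

```python
def bps_to_pir(bits_per_second):
    """Convert a rate in bit/s to bytes/s (the assumed PIR unit)."""
    return bits_per_second // 8


print(bps_to_pir(200_000_000))    # 25000000  (Guest Zone, 200Mbps)
print(bps_to_pir(5_000_000_000))  # 625000000 (R&D Dept, 5Gbps)
```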

Use Case 2: Critical Service Assurance (Voice First)

Benefit: Guarantee zero packet loss and low latency for VoIP even during congestion.

Example: Map Voice (DSCP 46) to Queue 7 (Strict Priority) and Data to Queue 0 (DWRR).

Config Code:

Define Mapping & Queueing (Scheduling at Level 4)

qos map dscp_to_tc voice-prio 46 7

qos map dscp_to_tc voice-prio 0 0

hqos-user-profile emp-standard

qos-map bind dscp_to_tc voice-prio

tc-queue 7 mode strict # Voice: Jump the queue

tc-queue 0 mode dwrr 1 # Data: Wait for turn

Summary

Implementing HQoS on SONiC and VPP (AsterNOS-VPP) allows network administrators to move from simple “Pipe Management” to sophisticated “Traffic Governance.”

By combining Logical Isolation at the tenant level with Hardware-Accelerated Scheduling at the packet level, AsterNOS-VPP delivers a solution that is both flexible enough for complex organizational structures and powerful enough for delay-sensitive applications.

Want to try it yourself? Please refer to the “AsterNOS-VPP HQoS Use Cases” for detailed configuration steps!
