Reduce OpenClaw Token Costs: Save Up to 100% via ET2508 Local Generation

written by Asterfusion

Introduction

We can reduce OpenClaw token costs. This is not a marketing claim. We enable local token generation on ET2508, making token production practical and cost-efficient.

This is possible because the platform includes an M.2 key slot for installing an AI module. With the module installed, it can run a range of AI workloads, from LLMs such as GPT-OSS-20B-A3B to computer vision tasks like detection and segmentation.

Next, let’s look at how the ET2508 helps reduce OpenClaw token costs.

Enabling OpenClaw for Network Operations via ET2508

OpenClaw has recently gained major traction in the developer community. Guides on local deployment and parameter tuning are everywhere. Many users are excited to run it on remote servers or dedicated compute nodes, expecting this AI strategist to handle everything.

But in real enterprise network operations, once the initial excitement fades, a practical engineering bottleneck quickly appears: remote compute has no native awareness of the local network.

No matter how capable an LLM is, it is still constrained by its context window. If you want it to troubleshoot network latency or analyze abnormal traffic, what do you actually feed it as input? Massive PCAP captures and tens of thousands of NetFlow logs, sent directly?

That approach creates serious data sovereignty and privacy concerns. It also burns through expensive tokens at high speed, while increasing the chance of hallucinations caused by raw and noisy data.

Large models need high-quality context. Networks need an edge intelligence layer that understands how to work with large models.

The “Ideal Host” for OpenClaw — ET2508 Hardware Specification

To be clear, when we designed the ET2508 as an open intelligent gateway, we did not anticipate the rapid rise of OpenClaw. The original goal was straightforward and engineering-driven: build a carrier-grade edge gateway with true hardware–software decoupling and converged compute capabilities.

The ET2508 is based on an 8-core ARM64 N2 processor. It comes with 16 GB DDR5 memory by default, expandable up to 48 GB via plug-in modules. It also runs as an open system with native support for Linux distributions such as Ubuntu and Debian.

This level of openness in both hardware and software creates a disruptive foundation for current AI workloads. It enables direct deployment of a quantized OpenClaw model on a network edge gateway.

With sufficient memory headroom, models in the 7B to 14B parameter range can run locally with stable performance.
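As a rough sanity check on that memory claim, the weight footprint of a quantized model can be approximated as parameter count times bits per weight. The sketch below covers weights only and ignores the KV cache, runtime overhead, and the gateway's own networking stack. The 4-bit and 8-bit quantization levels are assumptions for illustration, not figures from the ET2508 specification.

```python
def weight_memory_gib(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory needed for model weights alone, in GiB."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


# Assumed quantization levels; the article does not state which one is used.
for size in (7, 14):
    for bits in (4, 8):
        gib = weight_memory_gib(size, bits)
        print(f"{size}B model at {bits}-bit: about {gib:.1f} GiB of weights")
```

Under these assumptions, a 14B model at 8 bits needs roughly 13 GiB for weights alone, which leaves little of the default 16 GB for everything else. Lower-bit quantization or the memory expansion is what provides the headroom.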

A Free Local “Token Production Line” for OpenClaw

On ET2508, simply deploying the model is only the first step. The real engineering challenge is how to keep it properly “fed.” Directly passing raw network byte streams into a large model quickly exhausts the context window and drives API costs out of control.

This requires the gateway to do more than host the model. It must also take responsibility for upstream data cleansing and feature extraction. At this stage, ET2508 shows the strength of a true open hardware foundation.
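To make the context-window pressure concrete, here is a back-of-the-envelope estimate. It assumes roughly four characters per token, a common rule of thumb that real tokenizers only approximate. The flow-log line format and the record count of 20,000 are hypothetical stand-ins for "tens of thousands of NetFlow logs".

```python
CHARS_PER_TOKEN = 4  # assumption: rough heuristic, actual tokenizers vary


def estimate_tokens(text: str) -> int:
    """Very rough token estimate based on character count."""
    return max(1, len(text) // CHARS_PER_TOKEN)


# Hypothetical flow-log line; the format is illustrative only.
sample_line = (
    "2025-01-01T12:00:00Z 192.168.1.50:51544 -> 10.0.0.8:22 "
    "TCP packets=3 bytes=180 flags=S"
)
raw_lines = 20_000  # assumed number of raw flow records

raw_tokens = estimate_tokens(sample_line) * raw_lines
summary = '{"event_type": "abnormal_scan", "source_ip": "192.168.1.50", "action": "blocked"}'
summary_tokens = estimate_tokens(summary)

print(f"raw logs: ~{raw_tokens} tokens, summary: ~{summary_tokens} tokens")
```

The exact numbers depend on the tokenizer and log format, but the gap between raw records and a single summarized event is several orders of magnitude, which is the motivation for preprocessing on the gateway.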

First, it provides rich, high-quality data sources out of the box. The underlying AsterNOS-VPP system natively supports NetFlow and IPFIX-based traffic sampling. In addition, thanks to the open Ubuntu environment, tools such as ntopng and Snort can run directly on the gateway, enabling full packet visibility at the network edge.

Second, it offers a powerful hardware-accelerated pipeline. Through its M.2 interface, the ET2508 can integrate AI acceleration modules ranging from 26 TOPS up to 40 TOPS. This level of edge compute is sufficient to run lightweight feature extraction models locally.

As raw traffic flows through this pipeline, it is aggressively and efficiently denoised, filtered, and reduced in dimensionality. Unnecessary low-level heartbeat packets are removed. Thousands of repetitive malicious scans are compressed into a single structured JSON-style semantic token, for example:

{"event_type": "abnormal_scan", "source_ip": "192.168.1.50", "action": "blocked"}

This local transformation significantly helps reduce OpenClaw token costs by compressing raw telemetry into semantic tokens.
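A minimal sketch of that compression step is shown below. It is not the ET2508 pipeline itself: the input record shape, the `min_repeats` threshold, and the extra `attempts` and `distinct_ports` fields are all assumptions added for illustration. Only `event_type`, `source_ip`, and `action` come from the example above.

```python
import json
from collections import defaultdict


def compress_scan_events(events, min_repeats=100):
    """Collapse many blocked probes from one source into a single summary record."""
    ports_by_source = defaultdict(list)
    for event in events:
        if event["action"] == "blocked":
            ports_by_source[event["source_ip"]].append(event["dst_port"])

    summaries = []
    for source_ip, ports in ports_by_source.items():
        if len(ports) >= min_repeats:  # hypothetical threshold for "repetitive"
            summaries.append({
                "event_type": "abnormal_scan",
                "source_ip": source_ip,
                "action": "blocked",
                "attempts": len(ports),             # illustrative extra field
                "distinct_ports": len(set(ports)),  # illustrative extra field
            })
    return summaries


# 2,000 synthetic blocked probes from one host, one per destination port.
raw = [
    {"source_ip": "192.168.1.50", "dst_port": port, "action": "blocked"}
    for port in range(1, 2001)
]
summaries = compress_scan_events(raw)
print(json.dumps(summaries[0]))
```

Only the summary record would be placed in the model's context. The raw events stay on the gateway.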

No sensitive data needs to be sent to the cloud, and no expensive API calls are required. In this architecture, ET2508 itself becomes a local token production line, continuously feeding refined network intelligence in real time to the OpenClaw model running on the same device.

Use OpenClaw to Turn the Gateway into Your Personal Network Assistant

Once this localized AI data pipeline is in place, the network operations experience shifts fundamentally.

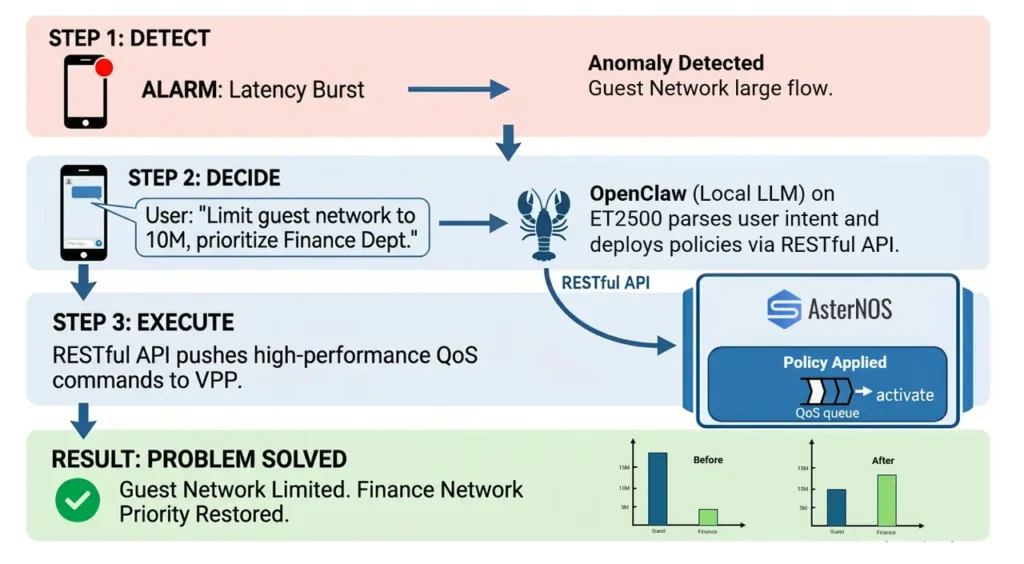

Imagine this scenario. You are outside having coffee when an alert arrives. The core business system is experiencing network latency.

In a traditional setup, you would need to open a laptop, connect to VPN, and log into multiple firewall and routing platforms. You then search through hundreds or thousands of ACL rules and QoS policies to identify the root cause.

With OpenClaw running on ET2508, the workflow becomes a simple cross-device conversation.

Because the gateway has already refined and structured traffic features through its preprocessing pipeline and synchronized them to the model, OpenClaw can proactively respond:

“An abnormal high-volume download has been detected in the guest network, consuming 80% of the egress bandwidth.”

At this point, you only need to reply from your phone: “Limit guest network bandwidth to 10 Mbps. Prioritize finance department traffic.”

OpenClaw understands the intent precisely and, through the standardized RESTful APIs on ET2508, directly pushes QoS queue scheduling and rate-limiting policies down to the AsterNOS-VPP system.
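The sketch below shows what such a policy push might look like once the intent has been parsed. The gateway hostname, the `/api/v1/qos/policies/` path, and the payload schema are all hypothetical. They are not taken from the AsterNOS-VPP API documentation, which should be consulted for the real endpoints. The request is only built, never sent.

```python
import json
from urllib.request import Request

GATEWAY = "https://et2508.example.net"  # placeholder address, not a real device


def build_qos_request(segment: str, rate_limit_mbps: int, priority_segment: str) -> Request:
    """Build (but do not send) a rate-limit request against a hypothetical endpoint."""
    payload = {
        "rate_limit_mbps": rate_limit_mbps,     # hypothetical field name
        "priority_segment": priority_segment,   # hypothetical field name
    }
    return Request(
        url=f"{GATEWAY}/api/v1/qos/policies/{segment}",  # hypothetical path
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="PUT",
    )


# "Limit guest network bandwidth to 10 Mbps. Prioritize finance department traffic."
req = build_qos_request("guest", 10, "finance")
print(req.get_method(), req.full_url)
print(req.data.decode("utf-8"))
```

In a real deployment, a human confirmation step or an allow-list of permitted actions between the model's output and the API call would be a sensible safeguard.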

The issue is resolved without even opening a laptop. Because only refined, structured context reaches the model, the same workflow also helps reduce OpenClaw token costs.

Build a Low-Cost OpenClaw Pipeline with ET2508 at the Network Edge

In today’s AI-driven landscape, large models such as OpenClaw demonstrate strong reasoning capabilities. However, without addressing the input quality and privacy constraints of the context window, even the most capable model is reduced to operating in a remote compute silo, effectively “interpreting shadows from incomplete data.”

Enterprise network operations require a physical edge device that understands the underlying routing systems and can interact seamlessly with large models.

The ET2508 open intelligent gateway, built on the AsterNOS-VPP stack, fills this gap with a fully decoupled hardware–software architecture and optional AI acceleration capabilities. It is not only a high-performance edge device with up to 60 Gbps forwarding capacity, but also a local token generation layer deployed at the network edge.

The idea is to let ET2508 generate high-quality tokens locally to feed OpenClaw. Raw network traffic is first distilled on-site into structured, AI-consumable semantic representations. These are then used as clean context inputs for the model.
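One way to picture this distillation is a per-segment bandwidth summary of the kind that would support the guest-network alert described earlier. The sketch assumes flows have already been labeled with a network segment and a byte count. Both that labeling and the output field names are illustrative assumptions.

```python
from collections import defaultdict


def summarize_egress(flows, top_n=3):
    """Reduce (segment, bytes) flow records to the top egress shares by segment."""
    totals = defaultdict(int)
    for segment, byte_count in flows:
        totals[segment] += byte_count

    grand_total = sum(totals.values())
    if grand_total == 0:
        return []

    ranked = sorted(totals.items(), key=lambda item: item[1], reverse=True)[:top_n]
    return [
        {"segment": segment, "egress_share_pct": round(100 * total / grand_total)}
        for segment, total in ranked
    ]


# Synthetic flow records: (segment label, bytes sent out the uplink).
flows = [("guest", 500), ("guest", 300), ("finance", 120), ("office", 80)]
context = summarize_egress(flows)
print(context)
```

A short list like this can be passed to the model as context in place of the underlying flow records.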

In return, model decisions are converted back into precise network policies through standard RESTful APIs, directly applied to the underlying AsterNOS-VPP system.

Overall, the ET2508 edge architecture is designed to reduce OpenClaw token costs through local context generation and filtering. This enables a complete and practical engineering loop.

More importantly, it represents a new direction from Asterfusion for network engineers and modern enterprises. Networks are no longer operated as passive, traditional infrastructure. They evolve into intelligent systems at the edge.

We also offer additional deployment options for OpenClaw across different platforms. Contact us and share your requirements, and we will help you identify the most suitable setup.
