SRv6 on SONiC: Evolution and Roadmap from Metro Networks to Data Center

As data center cloud environments place increasing demands on network programmability, service isolation, and automated operations, SRv6 (Segment Routing over IPv6) is becoming a key technology for next-generation data center networks. We have implemented SRv6 features on SONiC and deployed them in production. Based on real requirements from data center cloud scenarios, we continue to extend these capabilities beyond initial use cases. This lays the groundwork for cloud-based transport, VPN services, and future controller integration.

In the early stage, we completed the development and validation of core SRv6 features on SONiC for the CX-M series switches. As customer requirements evolved from traditional environments to the data center cloud, we began consolidating these capabilities into a structured framework and defined DC-oriented enhancements. Through a combination of baseline and advanced features, the solution addresses key SRv6 requirements in data center cloud deployments.

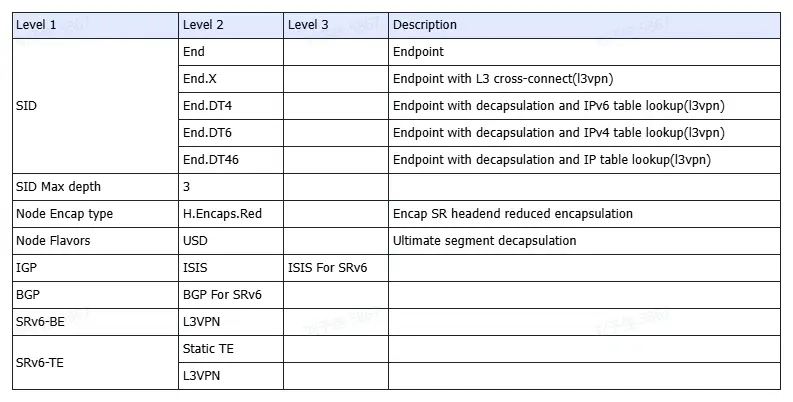

Available SRv6 Features on SONiC

Our initial phase of SRv6 development focused on establishing a robust foundation for Layer 3 services. We have implemented a comprehensive set of SRv6 endpoint behaviors, including the basic End behavior and the End.X behavior for L3 cross-connects. To support multi-tenant cloud environments, we added End.DT4, End.DT6, and End.DT46, which decapsulate the packet and perform a lookup in the tenant's IPv4, IPv6, or dual-stack L3VPN table.
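To make the decapsulate-and-lookup step concrete, here is a minimal Python sketch of how an egress node might resolve an End.DT4, End.DT6, or End.DT46 SID. It is illustrative only: the SIDs, VRF names, prefixes, and port names are hypothetical, and the code does not reflect how the SONiC data plane is actually programmed.

```python
import ipaddress

# Hypothetical local SID table: SID -> (behavior, VRF name).
LOCAL_SIDS = {
    ipaddress.IPv6Address("fc00:0:1:e000::"): ("End.DT4", "tenant-a"),
    ipaddress.IPv6Address("fc00:0:1:e001::"): ("End.DT6", "tenant-a"),
    ipaddress.IPv6Address("fc00:0:1:e002::"): ("End.DT46", "tenant-b"),
}

# Hypothetical per-VRF routing tables: prefix -> outgoing port.
VRF_TABLES = {
    "tenant-a": {
        ipaddress.ip_network("10.1.0.0/16"): "Ethernet4",
        ipaddress.ip_network("10.1.2.0/24"): "Ethernet8",
        ipaddress.ip_network("2001:db8:a::/48"): "Ethernet4",
    },
    "tenant-b": {
        ipaddress.ip_network("10.1.0.0/16"): "Ethernet12",
        ipaddress.ip_network("2001:db8:b::/48"): "Ethernet12",
    },
}

# IP versions each behavior accepts for the inner packet.
ALLOWED_VERSIONS = {"End.DT4": {4}, "End.DT6": {6}, "End.DT46": {4, 6}}


def lookup(outer_dst, inner_dst):
    """Return (vrf, port) for a packet whose outer DA is a local SID, else None."""
    entry = LOCAL_SIDS.get(ipaddress.IPv6Address(outer_dst))
    if entry is None:
        return None  # outer destination is not one of our SIDs
    behavior, vrf = entry
    dst = ipaddress.ip_address(inner_dst)
    if dst.version not in ALLOWED_VERSIONS[behavior]:
        return None  # e.g. an IPv6 payload arriving on an End.DT4 SID
    # Longest-prefix match inside the tenant's table only.
    matches = [
        net for net in VRF_TABLES[vrf]
        if net.version == dst.version and dst in net
    ]
    if not matches:
        return None
    best = max(matches, key=lambda net: net.prefixlen)
    return vrf, VRF_TABLES[vrf][best]
```

Note that both tenants use 10.1.0.0/16 in this sketch. The SID selects the table, so overlapping tenant address space stays isolated.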

On the headend side, our implementation supports H.Encaps.Red, which utilizes reduced encapsulation to minimize overhead—a critical factor for data center efficiency. We have also integrated USD (Ultimate Segment Decapsulation) flavors to streamline packet processing at the final segment. These features are fully integrated with ISIS and BGP for SRv6, allowing for both Best Effort (SRv6-BE) and Static Traffic Engineering (SRv6-TE) paths. This ensures that L3VPN services remain highly available and performant across the existing network fabric.
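The saving from reduced encapsulation can be sketched in a few lines of Python. With H.Encaps the SRH carries every SID of the policy; with H.Encaps.Red the first SID is placed only in the outer IPv6 destination address, and the SRH can be omitted entirely for a single-segment policy. The class and function names below are hypothetical and the SIDs are placeholders; this is a model of the header contents, not of the switch implementation.

```python
from dataclasses import dataclass
from typing import List, Optional

SRH_FIXED_BYTES = 8   # fixed part of the Segment Routing Header
SID_BYTES = 16        # one 128-bit SID


@dataclass
class Encap:
    outer_dst: str                      # outer IPv6 destination address
    srh_segments: Optional[List[str]]   # None means no SRH is pushed

    @property
    def srh_bytes(self) -> int:
        if self.srh_segments is None:
            return 0
        return SRH_FIXED_BYTES + SID_BYTES * len(self.srh_segments)


def h_encaps(sid_list: List[str]) -> Encap:
    """Full encapsulation: all SIDs go into the SRH, stored in reverse order."""
    if not sid_list:
        raise ValueError("SID list must not be empty")
    return Encap(sid_list[0], list(reversed(sid_list)))


def h_encaps_red(sid_list: List[str]) -> Encap:
    """Reduced encapsulation: the first SID lives only in the outer DA."""
    if not sid_list:
        raise ValueError("SID list must not be empty")
    if len(sid_list) == 1:
        return Encap(sid_list[0], None)  # single segment: SRH omitted
    return Encap(sid_list[0], list(reversed(sid_list[1:])))


path = ["fc00:0:1::", "fc00:0:2::", "fc00:0:3::"]
print(h_encaps(path).srh_bytes, h_encaps_red(path).srh_bytes)
```

For a best-effort L3VPN path with a single service SID, the reduced form carries no SRH at all, which is where most of the per-packet saving comes from.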

The full list is shown below:

Future SRv6 Roadmap for Data Centers

As we look toward the future of data center networking, our roadmap is focused on expanding into Layer 2 services and enhancing network resiliency. We are currently developing L2VPN capabilities, including End.DX2 for L2 cross-connects, End.DT2M for L2 flooding, and End.DT2U for unicast MAC table lookup. A major milestone in our upcoming release is the integration of G-SID[1] (Generalized SID, defined in the 2022 draft and later standardized as REPLACE-CSID by RFC 9800) with a compression factor of 12, paired with the COC (Continuation of Compression) flavor. This significantly reduces IPv6 header overhead, allowing longer SID lists without sacrificing payload space.
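The effect of SID compression on header size can be estimated with simple arithmetic. The Python sketch below compares an uncompressed SRH with a rough compressed estimate. It assumes 32-bit compressed SIDs packed four to a 128-bit container, with the first segment carried as a full SID; these parameters are illustrative assumptions and are not taken from our implementation, and the estimate ignores the finer encoding rules of RFC 9800.

```python
import math

SRH_FIXED_BYTES = 8   # fixed part of the Segment Routing Header
SID_BYTES = 16        # one 128-bit entry in the segment list


def srh_bytes_uncompressed(num_segments: int) -> int:
    """SRH size when every segment is a full 128-bit SID."""
    if num_segments < 1:
        raise ValueError("need at least one segment")
    return SRH_FIXED_BYTES + SID_BYTES * num_segments


def srh_bytes_compressed(num_segments: int, csid_bits: int = 32) -> int:
    """Rough SRH size with compressed SIDs (assumed layout, see lead-in).

    One full SID for the first segment, then the remaining segments
    packed into 128-bit containers of 128 // csid_bits entries each.
    """
    if num_segments < 1:
        raise ValueError("need at least one segment")
    per_container = 128 // csid_bits
    containers = math.ceil((num_segments - 1) / per_container)
    return SRH_FIXED_BYTES + SID_BYTES * (1 + containers)


for n in (1, 5, 10):
    print(n, srh_bytes_uncompressed(n), srh_bytes_compressed(n))
```

Under these assumptions, a 10-segment path shrinks from 168 bytes of SRH to 72 bytes, which is the kind of saving that makes longer explicit paths practical.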

Reliability is the cornerstone of our roadmap. We are introducing TI-LFA (Topology-Independent Loop-Free Alternate) for both ISIS and OSPF, providing sub-50ms convergence and high availability for critical paths. Furthermore, our EVPN-SRv6 implementation will span across VPLS, VPWS, and CCC, supporting all major Route Types (Type 1 through Type 5). This ensures that whether you are managing MAC/IP advertisements or Ethernet Segment Routes, the control plane remains unified and scalable.

To empower network administrators, we are also building out a sophisticated management layer. This includes BGP-EPE for egress peer engineering, BGP-LS for reporting detailed topology and SID information to external controllers, and PCEP for centralized path computation. Combined with Telemetry and SBFD (Seamless BFD), these tools provide a 360-degree view of the network and automated path optimization.

The roadmap below outlines the planned SRv6 capabilities that will further extend this architecture for future data center networks: (Updated in April 2026)

| Level 1 Feature | Level 2 Feature | Level 3 Feature | Level 4 Feature | Description |
| --- | --- | --- | --- | --- |
| SID | End.DX2 | - | - | Endpoint with decapsulation and L2 cross-connect (L2VPN) |
| SID | End.DT2M | - | - | Endpoint with decapsulation and L2 broadcast (L2VPN) |
| SID | End.DT2U | - | - | Endpoint with decapsulation and L2 unicast FDB lookup (L2VPN) |
| G-SID | 12 | - | - | - |
| Node Encap type | H.Insert.Red | - | - | Insert SRH into the IPv6 packet with reduced encapsulation |
| Node Encap type | H.Encaps.L2.Red | - | - | SR headend encapsulation of L2 frames with reduced encapsulation |
| Node Flavors | COC | - | - | G-SID mode; update DIP using compressed G-SID |
| IGP | ISIS | FRR | TI-LFA | High availability for path |
| IGP | OSPF | OSPF for SRv6 | - | - |
| IGP | OSPF | FRR | TI-LFA | High availability for path |
| SRv6-BE | EVPN | L2VPN | VPLS | Type 1 (Ethernet Auto-Discovery Route), carrying the VPN SID |
| SRv6-BE | EVPN | L2VPN | VPLS | Type 2 (MAC/IP Advertisement Route) |
| SRv6-BE | EVPN | L2VPN | VPLS | Type 3 (Inclusive Multicast Ethernet Tag Route) |
| SRv6-BE | EVPN | L2VPN | VPLS | Type 4 (Ethernet Segment Route) |
| SRv6-BE | EVPN | L2VPN | VPWS | Type 1 (Ethernet Auto-Discovery Route), carrying the service ID and SID |
| SRv6-BE | EVPN | L2VPN | VPWS | Type 4 (Ethernet Segment Route) |
| SRv6-BE | EVPN | L2VPN | CCC | - |
| SRv6-BE | EVPN | L3VPN | - | Type 5 (IP Prefix Route) |
| SRv6-TE | TE policy | - | - | - |
| SRv6-TE | EVPN | L2VPN | VPLS | - |
| SRv6-TE | EVPN | L2VPN | VPWS | - |
| SRv6-TE | EVPN | L2VPN | CCC | - |
| SRv6-TE | EVPN | L3VPN | Type 1 (Ethernet Auto-Discovery Route) | Supports carrying SRv6 SID information |
| SRv6-TE | EVPN | L3VPN | Type 2 (MAC/IP Advertisement Route) | Supports carrying VPN SID information |
| SRv6-TE | EVPN | L3VPN | Type 3 (Inclusive Multicast Ethernet Tag Route) | Supports carrying VPN SID information |
| SRv6-TE | EVPN | L3VPN | Type 4 (Ethernet Segment Route) | - |
| SRv6-TE | EVPN | L3VPN | Type 5 (IP Prefix Route) | Supports carrying VPN SID information |
| Telemetry | - | - | - | Collect data from the switch remotely |
| BGP-EPE | - | - | - | Egress peer engineering |
| BGP-LS | BGP-LS for SRv6 | SRv6 SID NLRI | SRv6 SID Information TLV | Report topology information collected by IGPs to the controller |
| BGP-LS | BGP-LS for SRv6 | SRv6 SID NLRI | SRv6 Endpoint Behavior TLV | - |
| BGP-LS | BGP-LS for SRv6 | SRv6 SID NLRI | SRv6 BGP Peer Node SID TLV | - |
| BGP-LS | BGP-LS for SRv6 | Node NLRI | SRv6 Capabilities TLV | - |
| BGP-LS | BGP-LS for SRv6 | Node NLRI | SRv6 Node MSD Types | - |
| BGP-LS | BGP-LS for SRv6 | Link NLRI | SRv6 End.X SID TLV | - |
| BGP-LS | BGP-LS for SRv6 | Link NLRI | SRv6 LAN End.X SID TLV | - |
| BGP-LS | BGP-LS for SRv6 | Link NLRI | SRv6 Link MSD Types | - |
| BGP-LS | BGP-LS for SRv6 | Prefix NLRI | SRv6 SID Structure TLV | - |
| BGP-LS | BGP-LS for SRv6 | Prefix NLRI | SRv6 Locator TLV | - |
| PCEP | PCEP for SRv6 | path-setup-type TLV | - | Works with the controller to centrally compute the optimal path |
| PCEP | PCEP for SRv6 | path-setup-type-capabilities TLV | - | - |
| PCEP | PCEP for SRv6 | SRv6 RRO subobject | - | - |
| PCEP | PCEP for SRv6 | SRv6 ERO subobject | - | - |
| SBFD | - | - | - | Simplified mechanism of BFD |
| PIMv4/PIMv6 | - | - | - | - |
| NG MVPN | - | - | - | - |

Use Cases: How These Capabilities Deliver Value in Data Center Cloud

In the practical network architecture of modern cloud data centers, the capabilities above map to three typical use cases: multi-tenant Layer 3 transport and inter-DC interconnection, Layer 2 service extension and cloud private line transport, and traffic engineering with automated operations.

Across these three scenarios, the value of SRv6 can be summarized in three key areas: enabling unified end-to-end orchestration, simplifying the protocol stack through a converged IPv6/SRv6 transport model, and supporting flexible expansion with long-term evolution through programmability.

Scenario 1: Multi-Tenant Layer 3 Transport and Inter-Data Center Interconnection

As cloud data centers continue to scale, multi-tenant services require unified transport across geographically distributed sites. The network must maintain strict tenant isolation while allowing flexible workload migration and policy changes.

Challenges:

- Multi-tenant networks rely on traditional VPN technologies, with limited cross-DC orchestration and inconsistent end-to-end policy control

- Complex protocol stacks with parallel MPLS and VXLAN deployments increase operational overhead

- Static forwarding paths limit traffic steering and cannot adapt well to dynamic workloads

Solution:

Use SRv6 BE forwarding to carry L3VPN services. A unified IPv6 data plane provides tenant-aware Layer 3 isolation and cloud-scale transport. With the SRv6 Service SID model, tenant traffic can be mapped to programmable forwarding behaviors, enabling end-to-end interconnection across data centers.

With support for EVPN Type 5 and related SRv6 route attributes, prefix advertisement and Service SID association are further enhanced. This simplifies the transport architecture while improving network scalability.

Scenario 2: Layer 2 Service Extension and Cloud Private Line Transport

In virtualization deployments, inter-site disaster recovery, and cloud private connectivity scenarios, services often depend on Layer 2 extension and stable site-to-site communication.

Challenges:

- Layer 2 extension often requires multiple overlay technologies, creating architectural complexity and scaling limits

- Layer 2 reachability and path control are separated, making it difficult to achieve both connectivity and deterministic forwarding

- Cross-data-center Layer 2 services lack unified orchestration

Solution:

With SRv6 Layer 2 service functions such as End.DX2, End.DT2M, and End.DT2U, combined with an EVPN control plane, the network can deliver point-to-point private lines and cross-site Layer 2 extension services.
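The difference between the three Layer 2 behaviors comes down to how the egress node chooses outgoing ports after decapsulation. The following Python sketch models that decision under simplifying assumptions: the `BridgeTable` class, port names, and MAC addresses are hypothetical, and the ports excluded for End.DT2M are passed in directly rather than derived from the SID argument.

```python
from typing import Dict, FrozenSet, Iterable, List


class BridgeTable:
    """Hypothetical per-service L2 table: member ports plus a learned FDB."""

    def __init__(self, ports: Iterable[str]):
        self.ports: List[str] = list(ports)
        self.fdb: Dict[str, str] = {}  # MAC address -> port

    def learn(self, mac: str, port: str) -> None:
        self.fdb[mac.lower()] = port


def end_dx2(out_port: str) -> List[str]:
    """End.DX2: cross-connect to one fixed port (point-to-point private line)."""
    return [out_port]


def end_dt2u(table: BridgeTable, dst_mac: str) -> List[str]:
    """End.DT2U: unicast FDB lookup; flood within the table on a miss."""
    port = table.fdb.get(dst_mac.lower())
    return [port] if port is not None else list(table.ports)


def end_dt2m(table: BridgeTable,
             excluded_ports: FrozenSet[str] = frozenset()) -> List[str]:
    """End.DT2M: flood to all member ports except the excluded ones."""
    return [p for p in table.ports if p not in excluded_ports]


table = BridgeTable(["Ethernet0", "Ethernet4", "Ethernet8"])
table.learn("00:11:22:33:44:55", "Ethernet4")
print(end_dt2u(table, "00:11:22:33:44:55"))      # known MAC: one port
print(end_dt2u(table, "00:aa:bb:cc:dd:ee"))      # unknown MAC: flood
print(end_dt2m(table, frozenset({"Ethernet8"}))) # BUM traffic with filtering
```

In a multihomed EVPN deployment, the excluded set is what prevents flooded traffic from looping back onto the Ethernet segment it came from.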

On SONiC-based platforms, a unified IPv6 data plane reduces the complexity caused by parallel MPLS and VXLAN encapsulations. Combined with SRv6 TE Policy, Layer 2 traffic can follow explicitly engineered paths, enabling unified traffic steering across data centers.

Scenario 3: Traffic Engineering and Automated Operations

As data center networks grow in scale, operators need global traffic optimization and efficient automation to maintain service stability and improve resource utilization.

Challenges:

- Traditional traffic engineering depends on static configuration and lacks global optimization

- Fault detection and convergence mechanisms operate independently, without closed-loop coordination

- Manual operations do not scale well for large networks

Solution:

With SRv6 programmability, the network can evolve from static TE to a controller-driven TE Policy model. BGP-LS exports topology and link-state information, while PCEP installs path policies for end-to-end traffic orchestration and global optimization across regional data centers.
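A controller-driven TE Policy can be pictured as a set of candidate paths, where the valid path with the highest preference becomes active and its segment lists share traffic by weight. The Python sketch below models only that selection logic. The class names, SIDs, and preference values are hypothetical, tie-breaking between equal preferences is left out, and nothing here represents the PCEP or BGP encoding used to install the policy.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class SegmentList:
    sids: List[str]
    weight: int = 1


@dataclass
class CandidatePath:
    preference: int
    segment_lists: List[SegmentList]
    valid: bool = True  # e.g. cleared when path verification or SBFD fails


@dataclass
class SRPolicy:
    color: int
    endpoint: str
    candidate_paths: List[CandidatePath] = field(default_factory=list)


def active_path(policy: SRPolicy) -> Optional[CandidatePath]:
    """Pick the valid candidate path with the highest preference."""
    usable = [cp for cp in policy.candidate_paths
              if cp.valid and cp.segment_lists]
    return max(usable, key=lambda cp: cp.preference, default=None)


def traffic_shares(path: CandidatePath) -> List[Tuple[List[str], float]]:
    """Split traffic across segment lists in proportion to their weights."""
    total = sum(sl.weight for sl in path.segment_lists)
    return [(sl.sids, sl.weight / total) for sl in path.segment_lists]


policy = SRPolicy(color=100, endpoint="fc00:0:9::1", candidate_paths=[
    CandidatePath(200, [SegmentList(["fc00:0:2::", "fc00:0:9::"])], valid=False),
    CandidatePath(100, [SegmentList(["fc00:0:3::", "fc00:0:9::"], weight=3),
                        SegmentList(["fc00:0:4::", "fc00:0:9::"], weight=1)]),
])
best = active_path(policy)
print(best.preference, traffic_shares(best))
```

When the preferred path is marked invalid, traffic falls back to the next candidate without any change to the policy itself, which is the hook that ties fault detection to path optimization.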

TI-LFA provides fast reroute, SBFD enables rapid fault detection, and Telemetry delivers real-time visibility and operational feedback. Together, they form an automated closed-loop framework covering detection, convergence, and optimization.

With a unified IPv6 data plane and programmable forwarding model, the network can continue to evolve and meet large-scale automation requirements.

Looking Ahead: More Requirements, More Possibilities

The value of SRv6 on SONiC lies in openness, scalability, and customization. The capability roadmap outlined above for Cloud Data Center Interconnect and IP Backbone reflects our phased development plan, shaped by real customer requirements and long-term technology evolution.

If you have more specific needs when deploying SRv6 in your data center cloud, such as greater SID depth, adaptation to specific hardware platforms, integration with existing controller systems, or prioritization across EVPN, TE, or telemetry features, we welcome further discussion.

With the open ecosystem of SONiC, we can continue to advance the roadmap while also supporting feature customization based on project requirements. This helps accelerate SRv6 deployment and enables scalable adoption in production networks.

Note:

[1]: Around 2019, two competing SID compression schemes coexisted in the industry: G-SID (primarily proposed by China Mobile, Huawei, and ZTE) and uSID (led by Cisco). This continued until 2025, when the IETF SPRING Working Group—comprising major stakeholders like China Mobile, Huawei, Cisco, and Juniper—successfully integrated and merged these approaches into a unified C-SID (Compressed SID) solution. This standardized framework defines three distinct types: REPLACE-CSID, NEXT-CSID, and NEXT&REPLACE-CSID. Within the industry, REPLACE-CSID is still commonly referred to as G-SID, while NEXT-CSID is recognized as uSID.
