European Top-Tier Internet Giant: Building Hyperscale Data Centers Based on Asterfusion 400G OCP ORv3-Compliant Switches

Written by Asterfusion

Customer Background: A Leading European Internet Platform Company

A top-tier internet platform company ranked among the top five in Europe operates across multiple core digital domains, including mapping services, e-commerce ecosystems, mobility platforms, and search engines. It manages a highly complex and massively scaled distributed infrastructure.

As one of the most demanding internet infrastructures in Europe, the company has long been under continuous pressure from the coexistence of large-scale real-time traffic and rapidly growing AI-driven workloads. This has placed increasingly stringent requirements on data center networks in terms of scalability, reliability, and extreme performance.

Over the course of its infrastructure evolution, the customer has adopted SONiC and white-box switching architectures extensively and has also worked with other open networking vendors. As the environment has scaled, however, the customer has found that enterprise-grade SONiC support and continuous software iteration are critical to production network stability and architectural evolution.

The collaboration between the customer and Asterfusion began in 2024. Since then, hundreds of CX532P-N switches and 60 CX864E-N switches have been successfully deployed and validated in production environments, demonstrating strong maturity in performance, stability, and SONiC compatibility.

Building on this established foundation of trust and operational stability, the customer further expanded its collaboration in Q3 2025, planning to introduce 300 units of 400G OCP switches to support the next phase of its cloud and AI-driven network upgrade requirements.

The customer ultimately selected Asterfusion as a deep strategic partner due to its strong enterprise-grade SONiC technical support capabilities, rapid software iteration capability, and continuously optimized engineering delivery system, all of which ensure a stable and evolvable open networking foundation for large-scale production environments.

Key Challenges

1. Infrastructure Standardization

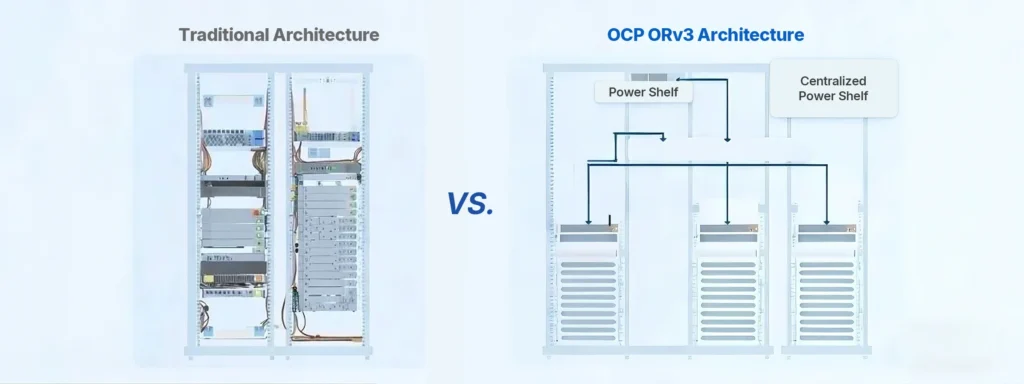

The customer planned to adopt 400G switches compliant with the OCP Open Rack V3 (ORv3) standard to align with its large-scale rack-level deployment architecture, enabling seamless integration and unified management across existing data center infrastructure.

2. High-Density Traffic Bottlenecks

With rapid business growth, traditional network architectures struggled to support the explosive increase in east-west traffic.

3. Hybrid Architecture Complexity

The customer required a stable interconnection between general-purpose compute (CPU) environments and existing GPU cluster networks while maintaining high efficiency.

4. Decentralization and Vendor Independence

A strategic goal was to eliminate vendor lock-in and establish a fully autonomous and controllable network evolution path.

Solution: 400G SONiC-Based OCP ORv3 Data Center Deployment

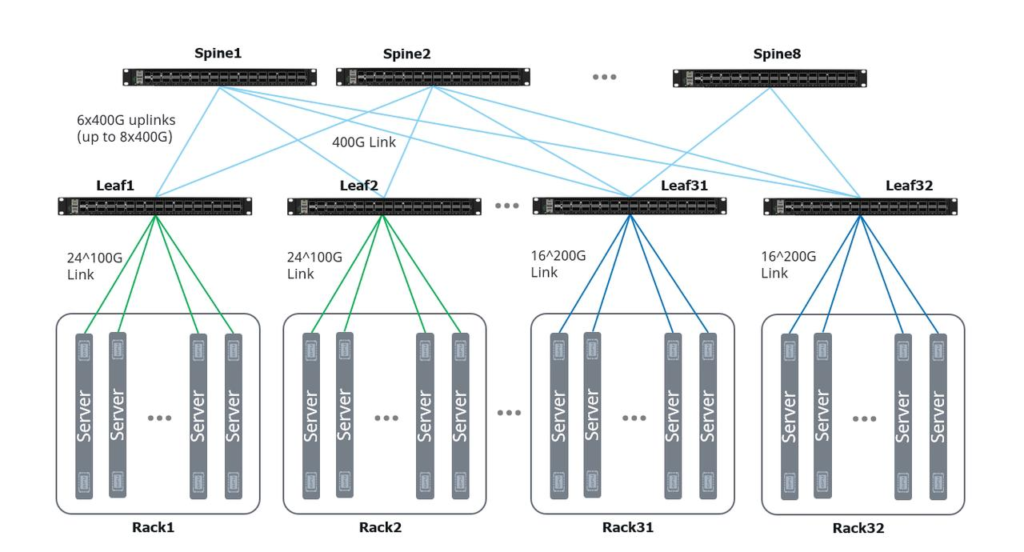

To address these requirements, we delivered a high-performance 400G OCP network architecture consisting of 240 leaf switches and 60 spine switches, fully powered by an enterprise SONiC operating system. The core value of this solution lies in its openness, flexibility, and scalability.

Reference: https://cloudswit.ch/product/ocp-orv3-data-center-switch-400g/

1. Physical Architecture and Compatibility

- Standards Compliance: Fully compliant with OCP ORv3 specifications, enabling direct integration into standard rack infrastructure.

- Ultra-High Density: 400G ToR (Top-of-Rack) access capability eliminates uplink bandwidth bottlenecks in large-scale clusters.

2. Deployment Architecture

The customer implemented a fine-grained deployment strategy across different layers:

🔹 ToR / Leaf Layer (240 units)

- Uplinks: Each leaf switch is equipped with 6×400G uplinks (2.4 Tbps), scalable up to 8×400G (3.2 Tbps), providing non-blocking connectivity to the spine layer when uplink capacity matches the downlink capacity in use.

- Downlinks: Supports high-density server connectivity with 24×100G or 16×200G configurations for CPU-based compute nodes.

- Innovative Testing (NIC Sharing): The customer is evaluating 400G breakout mode, where a single 400G port is split into two connections to different servers. This maximizes port utilization while preparing for future NIC evolution toward 400G.
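The uplink and downlink figures above can be checked with a short calculation. The following is a minimal sketch, not part of the customer's tooling: the function name and the pairing of each downlink option with a particular uplink count are assumptions made for illustration.

```python
from fractions import Fraction


def oversubscription(downlinks, down_gbps, uplinks, up_gbps):
    """Return the downlink:uplink bandwidth ratio as an exact fraction."""
    return Fraction(downlinks * down_gbps, uplinks * up_gbps)


# Port counts are taken from the leaf description above; which downlink
# option is combined with which uplink count is an assumption.
profiles = {
    "24x100G down / 6x400G up": (24, 100, 6, 400),
    "16x200G down / 8x400G up": (16, 200, 8, 400),
    "16x200G down / 6x400G up": (16, 200, 6, 400),
}

for name, ports in profiles.items():
    ratio = oversubscription(*ports)
    # A ratio of 1:1 or lower means uplinks can carry all downlink traffic.
    label = "non-blocking" if ratio <= 1 else "oversubscribed"
    print(f"{name}: {ratio.numerator}:{ratio.denominator} ({label})")
```

Under these assumptions, the 24×100G profile is non-blocking with 6 uplinks, while the 16×200G profile needs all 8 uplinks to stay at 1:1.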

🔹 Spine Layer (60 units)

Role: Core backbone layer connecting all leaf switches and providing high-speed fabric forwarding.

Fabric Architecture:

- Spine switches interconnect all 240 leaf switches, forming a standard leaf-spine Clos topology.

- ECMP (Equal-Cost Multi-Path) routing ensures non-blocking, scalable east-west traffic forwarding.
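To illustrate how ECMP spreads east-west flows across equal-cost uplinks, here is a minimal sketch of hash-based next-hop selection. It is a conceptual model only: the spine names and flow tuples are hypothetical, and real switch ASICs use hardware hash functions rather than SHA-256.

```python
import hashlib
from collections import Counter


def ecmp_next_hop(flow, next_hops):
    """Pick one equal-cost next hop by hashing the flow 5-tuple.

    Every packet of a flow hashes to the same next hop, which keeps
    packets of that flow in order.
    """
    key = "|".join(str(field) for field in flow).encode()
    digest = hashlib.sha256(key).digest()
    index = int.from_bytes(digest[:8], "big") % len(next_hops)
    return next_hops[index]


# Hypothetical: one leaf with six equal-cost uplinks to six spines.
spines = [f"spine-{i}" for i in range(1, 7)]

# Hypothetical flows: (src IP, dst IP, protocol, src port, dst port).
flows = [("10.0.0.1", "10.0.1.1", 6, 40000 + p, 443) for p in range(6000)]

usage = Counter(ecmp_next_hop(flow, spines) for flow in flows)
for spine in spines:
    print(spine, usage[spine])
```

Because selection is per flow rather than per packet, load balance depends on having many flows; a few very large flows can still concentrate on one uplink.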

Performance Characteristics:

- Full 400G high-speed interfaces

- Designed for large-scale distributed computing and cloud-native traffic demands

- Ensures ultra-low latency and high throughput across the fabric
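The leaf and spine counts in this deployment imply a port budget for the spine layer. The sketch below works through that arithmetic, assuming leaf uplinks are spread evenly across all 60 spines; the source does not state how the spines are grouped (for example into planes or pods), so the per-spine figure is an estimate rather than the actual cabling plan.

```python
LEAVES = 240      # leaf count from this case study
SPINES = 60       # spine count from this case study
PORT_GBPS = 400   # uplink speed from this case study


def fabric_budget(uplinks_per_leaf):
    """Summarize spine port demand for a given uplink count per leaf."""
    total_uplinks = LEAVES * uplinks_per_leaf
    # Assumption: uplinks are distributed evenly across every spine.
    per_spine, remainder = divmod(total_uplinks, SPINES)
    return {
        "total_leaf_uplinks": total_uplinks,
        "leaf_facing_ports_per_spine": per_spine,
        "evenly_divisible": remainder == 0,
        # One-direction sum of all leaf uplink bandwidth, in Tbps.
        "fabric_capacity_tbps": total_uplinks * PORT_GBPS / 1000,
    }


for uplinks in (6, 8):
    print(uplinks, fabric_budget(uplinks))
```

With 6 uplinks per leaf this gives 1,440 leaf uplinks, or 24 leaf-facing 400G ports per spine; scaling to 8 uplinks raises that to 1,920 uplinks and 32 ports per spine.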

3. Heterogeneous Network Coexistence

- General Compute Domain (Asterfusion 400G): Handles large-scale distributed workloads such as e-commerce transactions, search indexing, and map rendering, emphasizing cost efficiency and openness.

- GPU Compute Domain (Third-party EOR solution): Uses End-of-Row architecture with 200G NICs connected to third-party 24×200G + 8×400G switches for AI training workloads.

- Interconnect Value: Asterfusion 400G switches interoperate seamlessly with existing GPU networks via standard protocols, demonstrating the maturity of the SONiC ecosystem in heterogeneous environments.

🔸 Interconnect Capabilities

- Based on standard protocols (BGP / EVPN / ECMP)

- Enables seamless communication between GPU and general compute domains

- Validates SONiC openness and ecosystem maturity

4. Deployment Outcomes

- Operational Autonomy: Unified SONiC-based management enables fully automated network operations.

- Cost Optimization: Significant reduction in TCO and power consumption compared to traditional proprietary vendor solutions.

- Performance Improvement: Substantially reduced east-west latency, meeting the demands of AI inference and large-scale distributed computing.

5. Architectural Value Summary

- 240 Leaf + 60 Spine Clos fabric enabling extreme scalability

- 400G ToR architecture ensures future-proof evolution

- NIC sharing improves bandwidth utilization efficiency

- Clear separation between general compute and GPU compute domains

- SONiC-based open networking eliminates vendor lock-in

- Architected for sustained AI-driven traffic growth

6. Conclusion

By deploying a SONiC-based open networking architecture with a 240-leaf and 60-spine 400G OCP fabric design, the customer successfully built a high-performance, scalable, and fully autonomous data center network infrastructure.

This architecture not only meets current cloud and internet-scale business requirements but also provides a robust foundation for future AI-driven training and inference workloads at scale.