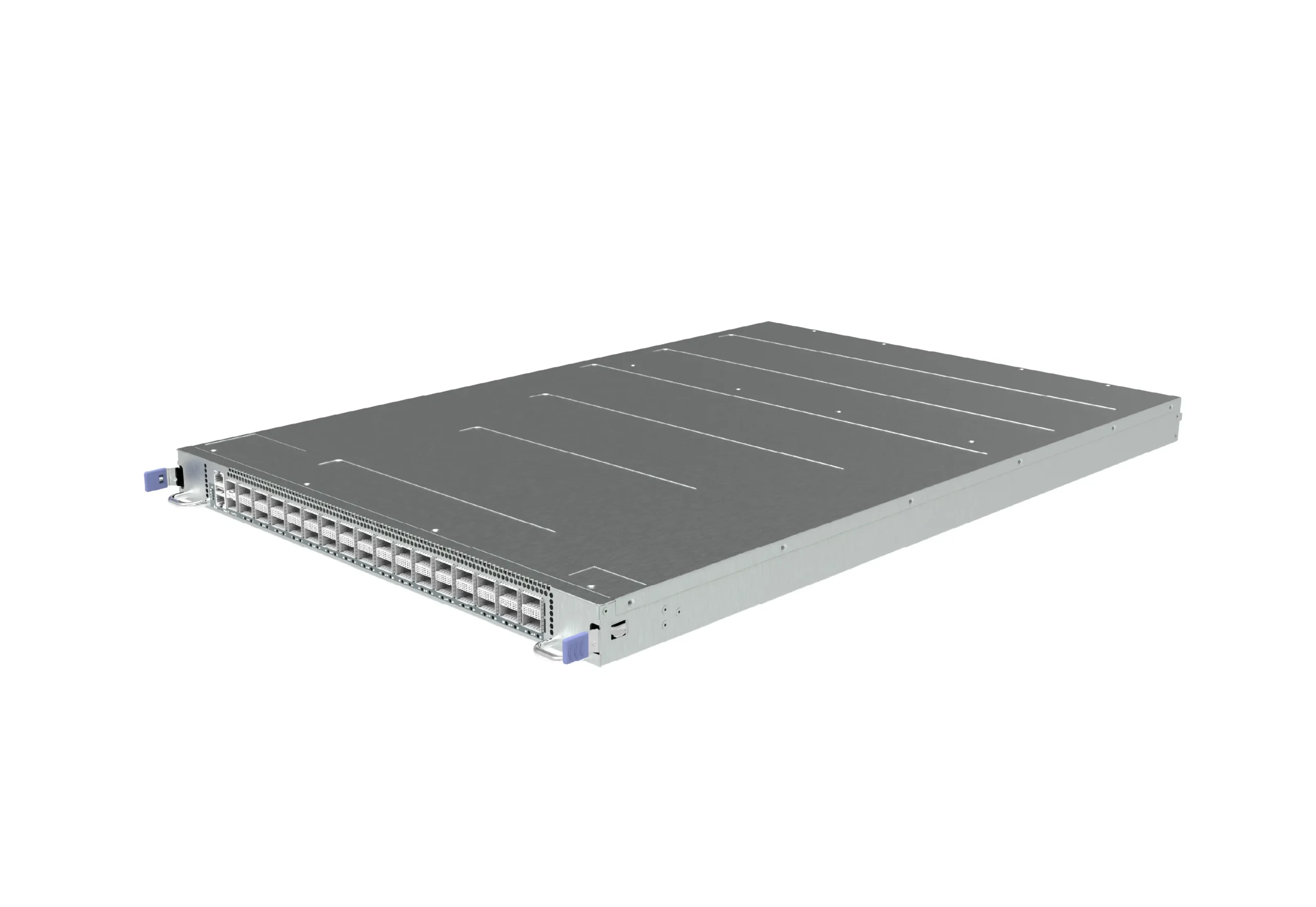

CX732Q-N-ORv3

OCP Rack ORv3 Data Center Switch for AI/ML/HPC, Enterprise SONiC Distribution

- Standardized Open Compute Project form factor (ORv3)
- 32 Port 400G QSFP-DD, 2 Port 10G SFP+
- Powered by Asterfusion’s Enterprise SONiC Distribution
- Support: RoCEv2, EVPN Multihoming, BGP-EVPN, INT, and more
- Purpose-built for AI, ML, HPC, and Data Center applications

OCP ORv3 Ready Data Center Switch with SONiC OS

Built to the rigorous standards of OCP, our switch integrates seamlessly into ORv3 racks. It leverages the 48V DC power bus bar architecture, reducing power conversion stages and increasing overall energy efficiency by up to 15% compared to traditional 12V systems.
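The benefit of a higher bus voltage can be illustrated with basic circuit arithmetic. The sketch below is only an illustration: the 1200 W load and 1 milliohm distribution path are hypothetical figures, not measurements of this switch. It covers resistive loss in the distribution path only and does not model the conversion-stage savings behind the efficiency figure quoted above.

```python
def busbar_current_amps(power_watts: float, bus_volts: float) -> float:
    """Current drawn from the bus for a given load power (I = P / V)."""
    return power_watts / bus_volts


def conduction_loss_watts(power_watts: float, bus_volts: float, path_ohms: float) -> float:
    """Resistive loss in the distribution path (P_loss = I^2 * R)."""
    current = busbar_current_amps(power_watts, bus_volts)
    return current ** 2 * path_ohms


# Hypothetical values for illustration only.
LOAD_W = 1200.0      # assumed load power
PATH_OHMS = 0.001    # assumed resistance of the distribution path

for volts in (12.0, 48.0):
    amps = busbar_current_amps(LOAD_W, volts)
    loss = conduction_loss_watts(LOAD_W, volts, PATH_OHMS)
    print(f"{volts:.0f} V bus: {amps:.1f} A, {loss:.3f} W lost in the path")
```

For the same load, quadrupling the voltage cuts the current to a quarter and the resistive loss to one sixteenth, regardless of the specific load and resistance chosen.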

Engineered for high-radix networks, the ORv3 switch supports ultra-high-density QSFP-DD ports. Whether you are building a non-blocking leaf-spine fabric or an AI backend network, our platform delivers wire-speed throughput with nanosecond-level latency.
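As a rough sizing aid for the non-blocking leaf-spine case mentioned above, the following sketch computes how large a two-tier fabric can be when built from identical 32-port switches. It assumes every port runs at 400G with no breakout, half of each leaf's ports face hosts, and each leaf has exactly one link to every spine. The function names are illustrative and not part of any product API.

```python
def oversubscription(down_ports: int, up_ports: int,
                     down_gbps: int = 400, up_gbps: int = 400) -> float:
    """Ratio of host-facing to fabric-facing bandwidth on a leaf (1.0 = non-blocking)."""
    return (down_ports * down_gbps) / (up_ports * up_gbps)


def two_tier_nonblocking(radix: int) -> dict:
    """Size a two-tier leaf-spine fabric built from identical switches.

    Assumes half of each leaf's ports face hosts, the other half face spines,
    and each leaf has exactly one link to every spine.
    """
    down = radix // 2      # host-facing ports per leaf
    up = radix - down      # uplinks per leaf, one per spine
    spines = up
    leaves = radix         # every spine port terminates one leaf
    return {
        "leaves": leaves,
        "spines": spines,
        "host_ports": leaves * down,
        "oversubscription": oversubscription(down, up),
    }


fabric = two_tier_nonblocking(32)  # 32 x 400G ports per switch
print(fabric)
```

Under these assumptions a 32-port switch yields 32 leaves, 16 spines, and 512 host-facing 400G ports. Using fewer uplinks per leaf raises the oversubscription ratio above 1.0 in exchange for more host ports.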

Every unit ships with Enterprise SONiC OS preloaded, giving you a turnkey solution. It supports VXLAN, BGP-EVPN, EVPN multihoming, MC-LAG, ECMP, and DCI, and offers rich telemetry and automation through INT, REST API, gNMI, NETCONF, ZTP, Ansible, and Python.

With deep packet buffering and intelligent congestion management, the OCP network switch is optimized for the “All-to-All” traffic patterns typical of AI training and high-performance computing (HPC), ensuring zero packet loss for critical synchronization traffic.

32×400G QSFP-DD Low-Latency Data Center Switch with Enterprise SONiC Distribution Preloaded

The Asterfusion CX732Q-N-O is a high-performance 32-port 400G data center switch designed for the super spine layer in next-generation CLOS network architectures. Built on the Marvell Teralynx platform, it delivers 12.8 Tbps of line-rate L2/L3 throughput with ultra-low latency as low as 400ns, meeting the stringent performance demands of AI/ML clusters, hyperscale data centers, HPC, and cloud infrastructure.

KEY POINTS

- ORv3: Open Rack Standard
- 500ns: Latency
- 32x400G: Port Density
- ORv3/48VDC: Power Supply

Key Facts

- 500ns latency
- ↓20.4% Inference Latency
- ↑27.5% Token Generation Rate

Optimized Software for Advanced Workloads – AsterNOS

Pre-installed with Asterfusion Enterprise SONiC NOS, the CX732Q-N offers a feature set tailored to AI and HPC workloads, including Flowlet Switching, Packet Spray, WCMP, Auto Load Balancing, and INT-based Routing.
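To illustrate the general idea behind flowlet switching, here is a minimal software sketch. It is not AsterNOS's or the ASIC's implementation. The class name, the least-loaded path choice, and the 50-microsecond gap are assumptions made for the example. A flow stays on its current path while packets arrive back to back, and may move to another path only after an idle gap long enough that earlier packets have already been delivered, which avoids reordering.

```python
class FlowletBalancer:
    """Toy flowlet load balancer: a flow may change path only after an idle gap."""

    def __init__(self, num_paths: int, gap_us: float):
        self.gap_us = gap_us                  # idle time that starts a new flowlet
        self.load_bytes = [0] * num_paths     # bytes sent on each path so far
        self.flows = {}                       # flow key -> (last_seen_us, path)

    def pick_path(self, flow_key: str, now_us: float, size_bytes: int) -> int:
        entry = self.flows.get(flow_key)
        if entry is None or now_us - entry[0] > self.gap_us:
            # First packet or gap exceeded: start a new flowlet on the least-loaded path.
            path = min(range(len(self.load_bytes)), key=self.load_bytes.__getitem__)
        else:
            # Still inside the current flowlet: keep the path to preserve packet order.
            path = entry[1]
        self.flows[flow_key] = (now_us, path)
        self.load_bytes[path] += size_bytes
        return path


lb = FlowletBalancer(num_paths=2, gap_us=50.0)  # hypothetical gap threshold
paths = [
    lb.pick_path("gpu0->gpu7", now_us=0.0, size_bytes=1000),
    lb.pick_path("gpu0->gpu7", now_us=10.0, size_bytes=1000),   # same flowlet
    lb.pick_path("gpu0->gpu7", now_us=100.0, size_bytes=1000),  # new flowlet after a 90 us gap
]
print(paths)
```

In this run the first two packets share a path and the third moves to the other, less loaded path. Hardware implementations typically track flowlets in fixed-size hash tables rather than a per-flow dictionary.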

- Cloud Virtualization: DCI, VXLAN, BGP EVPN
- RoCEv2: Easy RoCE, PFC/ECN/DCBX
- High Availability: ECMP, MC-LAG, EVPN Multihoming
- Ops Automation: In-band Telemetry, REST API/NETCONF/gNMI

Features

OCP ORv3 Rack Standard

The OCP ORv3 rack standard improves power supply, heat dissipation, and cabling efficiency. The core advantages of the OCP ORv3 400G data center switch are a standardized open rack ecosystem (OCP ORv3, with optional ORv2 compatibility), 400G bandwidth, and sustainability.

Traditional Architecture vs. OCP ORv3 Architecture

The ORv3 standard improves power supply capabilities and upgrades connector specifications for high-power devices. For example, it increases the maximum current limit per contact, which facilitates unified power supply racks and their integration with 400G switches, batteries, servers, and other devices.

Marvell Teralynx 7 Inside: Unleash Industry-Leading Performance & Ultra-Low Latency

- 12.8 Tbps: Switching Capacity
- 500ns: End-to-end Latency
- 70 MB: Packet Buffer

Full RoCEv2 Support for Ultra-Low Latency and Lossless Networking

Asterfusion Network Monitoring & Visualization

O&M in Minutes, Not Days

Panel Illustration

Resources

FAQs

- An OCP ORv3 400G switch is a data center switch that complies with the OCP Open Rack v3 (ORv3) standard and provides 400G Ethernet ports (for example, 32×400G or 64×400G). It is typically used for spine/leaf or AI/ML cluster interconnect.

- Most products use 12.8T or 25.6T ASICs (32×400G or 64×400G respectively), matching ORv3’s 48V power and high-power cooling design.

- ORv3 introduces a 48V bus and around 100A IT gear connector capability, increasing the per-contact current rating compared with ORv2 and making it more suitable for high-power 400G switches.

- Standardized power shelves, busbars, and IT gear connectors allow servers and switches to share unified power within the rack, simplifying energy monitoring and device replacement.

- Common port configurations include 32×400G QSFP-DD (12.8T) or 64×400G (25.6T), sometimes with additional 10G/25G management or telemetry ports.

- Chassis are usually 1U or 2U with front-to-back or back-to-front airflow options, and hot-swappable redundant PSUs supporting the ORv3 AC/DC rack power architecture.

- In AI clusters, these switches act as TOR/leaf switches for GPU servers, providing 400G uplinks (for example 4×100G or 1×400G) to spine or core switches to build RoCEv2 fabrics for AI training.

- Combined with low-latency ASICs and RoCEv2 optimizations (ECN, PFC, queue tuning), they form a lossless or near-lossless network with high-performance compute and storage nodes.

- The common pluggable format is QSFP-DD, using 400G DR4, FR4, or SR8 optics or DAC/AOC cables, selected according to topology and link distance.

- Because each 400G port carries very high traffic, optical quality, link budget, and testing requirements are stricter than for 100G. Standardized selection and pre-production validation are highly recommended.

- 400G remains mainstream for now, but leading silicon and system vendors have published clear 800G roadmaps, so it is wise to choose suppliers with a transparent 400G→800G evolution path.

- By planning cabling carefully (for example, using fiber and patching that can be reused for 400G/800G), you can keep most cabling assets and replace only switches and optics during future upgrades.
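The FAQ above notes that 400G optics and cables are selected according to link distance. A minimal sketch of that selection follows. It uses the nominal reaches of the SR8, DR4, and FR4 interface types, while the 3 m DAC limit is an assumption about typical passive copper. Actual reach depends on fiber type and vendor, so check module datasheets before ordering. The function name is illustrative.

```python
# (media, nominal reach in metres), ordered from shortest to longest reach.
NOMINAL_REACH_M = [
    ("DAC", 3),      # assumption: typical passive copper limit, varies by vendor
    ("SR8", 100),    # multimode fiber (OM4)
    ("DR4", 500),    # parallel single-mode fiber
    ("FR4", 2000),   # duplex single-mode fiber
]


def pick_400g_media(distance_m: float):
    """Return the shortest-reach media type that covers the link, or None."""
    for media, reach_m in NOMINAL_REACH_M:
        if distance_m <= reach_m:
            return media
    return None  # beyond FR4 reach: a longer-reach optic is needed


for link_m in (2, 80, 300, 1500):
    print(f"{link_m} m -> {pick_400g_media(link_m)}")
```

Choosing the shortest-reach option that covers the link is only a starting heuristic. Existing fiber plant, breakout requirements, and power budget can all justify a different choice.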