Boost NetApp MetroCluster IP Storage with Asterfusion Data Center Switch

written by Asterfusion


What is NetApp MetroCluster?

NetApp MetroCluster gives enterprise customers continuous data availability and protection by combining array-based clustering with synchronous replication. It provides continuously available storage for NetApp ONTAP on FAS and AFF systems.

MetroCluster utilizes two primary storage fabrics: FC and Ethernet. The Ethernet storage fabric, called MetroCluster IP, eliminates the requirement for SAS bridges and replicates NVRAM using the same Ethernet ports as the storage network. This eliminates the need for dedicated FC switches and simplifies the setup process.

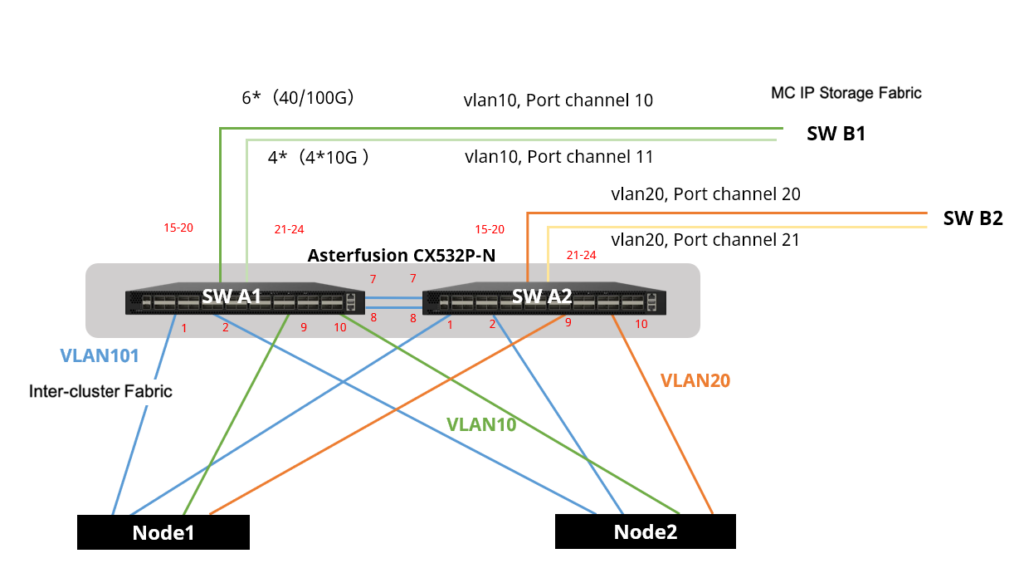

MetroCluster IP Architecture

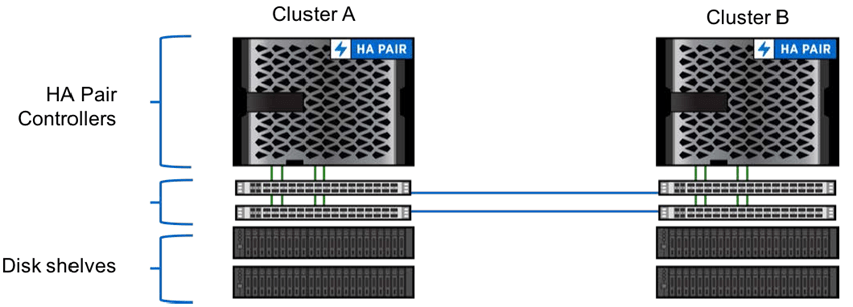

- One HA pair controller per site

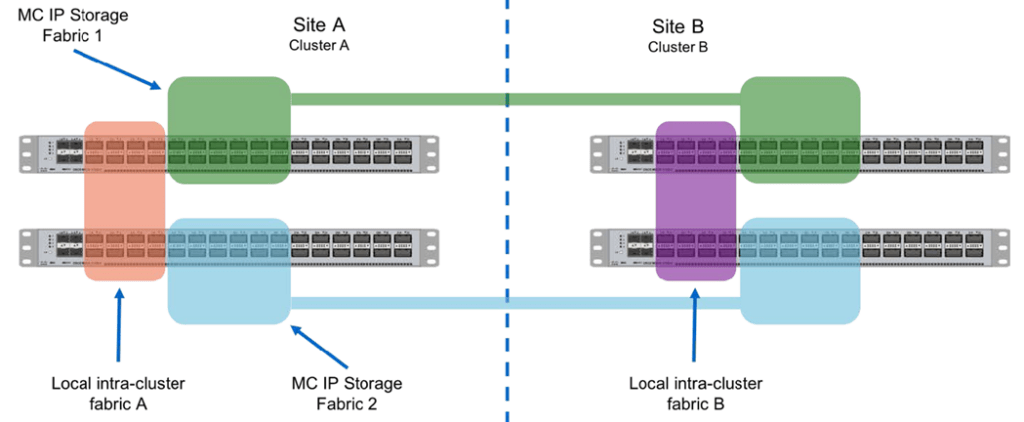

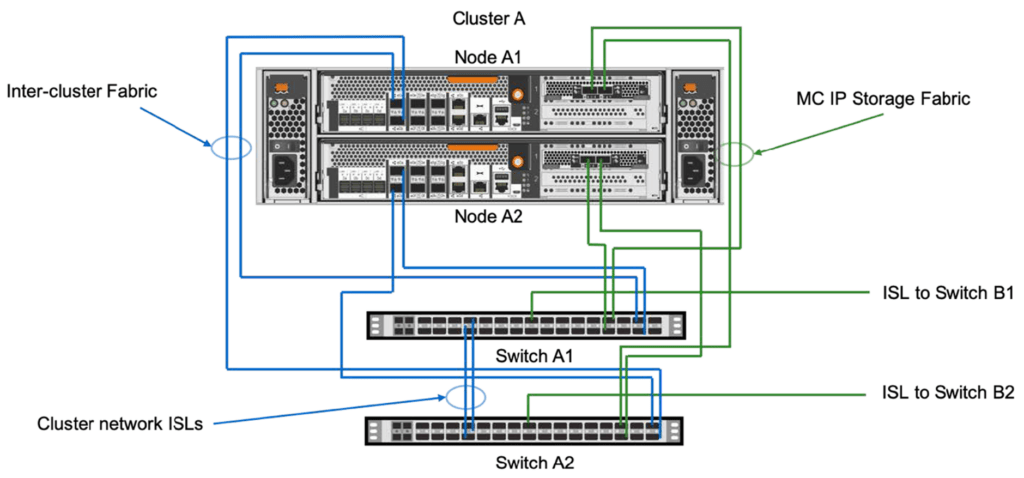

- Two high-speed Ethernet switches per site: Collapsed intracluster and intercluster switches for local and remote replication

- Disk shelves or internal storage

Focusing on the back-end storage network, MetroCluster has two independent sets of fabrics: the IP storage network (Fabric 1 & 2, remote) and the local cluster interconnect (Fabric A & B). In a multi-node cluster, you'll need switches so the nodes can communicate over the cluster interconnect.

Ethernet switches compatible with MetroCluster IP

The NetApp-validated MetroCluster IP switches are listed in the NetApp Hardware Universe.

To access the list, you'll need to register with your email. But fret not, I've done the legwork for you and explored the various vendors: the Cisco Nexus 3132Q-V, Cisco Nexus 3232C, and Cisco Nexus N9K-C9336C-FX2 series, Broadcom's BES-53248 series, and NVIDIA's SN2100 series.

| Platform | Network Speed (Gbps) | Port Mode |
| --- | --- | --- |
| AFF A900 | 100 | trunk mode |
| AFF A800 | 40 or 100 | access mode |
| AFF A700 | 40 | access mode |
| AFF A400 | 40 or 100 | trunk mode |
| AFF A320 | 100 | access mode |
| AFF A300 | 25 | access mode |
| FAS9000 | 40 | access mode |
| FAS9500 | 100 | trunk mode |
| FAS8700 | 100 | trunk mode |
| FAS8300 | 40 or 100 | trunk mode |
| FAS8200 | 25 | access mode |

Speeds and port modes for MetroCluster compliant switches
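If you want to script a quick sanity check while planning switch ports, the table above can be captured as a small lookup. This is only a sketch: the values are transcribed from the table, while the names `PLATFORMS`, `port_settings`, and `platforms_for_speed` are made up for illustration and are not part of any NetApp tool.

```python
# Values transcribed from the table above; all names here are illustrative.
PLATFORMS = {
    "AFF A900": ((100,), "trunk"),
    "AFF A800": ((40, 100), "access"),
    "AFF A700": ((40,), "access"),
    "AFF A400": ((40, 100), "trunk"),
    "AFF A320": ((100,), "access"),
    "AFF A300": ((25,), "access"),
    "FAS9000": ((40,), "access"),
    "FAS9500": ((100,), "trunk"),
    "FAS8700": ((100,), "trunk"),
    "FAS8300": ((40, 100), "trunk"),
    "FAS8200": ((25,), "access"),
}


def port_settings(platform):
    """Return (supported speeds in Gbps, port mode) for a platform in the table."""
    try:
        return PLATFORMS[platform]
    except KeyError:
        raise ValueError(f"{platform!r} is not in the table") from None


def platforms_for_speed(gbps):
    """List the platforms in the table that support the given speed."""
    return sorted(name for name, (speeds, _) in PLATFORMS.items() if gbps in speeds)


if __name__ == "__main__":
    speeds, mode = port_settings("AFF A400")
    print(f"AFF A400: {speeds} Gbps, {mode} mode")
    print("25G platforms:", platforms_for_speed(25))
```

A lookup like this makes it easy to see, for example, which platforms need 25G-capable switch ports before you pick a switch model.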

You may notice that these are all long-standing, reputable manufacturers. Their main selling points? Stability and dependability. These products have gone through rigorous testing and have been widely deployed. However, this quality assurance comes with a hefty price tag.

This brings us to an intriguing question: are there vendors in the open networking space who can strike the right balance between capable products and cost-effectiveness?

Considering Whitebox Switches as Cisco/NVIDIA Alternatives for MetroCluster IP

As we know, MetroCluster IP configurations have been able to use MetroCluster-compliant switches since the release of ONTAP 9.7, which means you can buy Ethernet switches from other vendors for your back-end storage network.

This is good news! But there are still some switching and cabling requirements:

- QoS/traffic classification.

- Explicit congestion notification (ECN).

- L4 port-vlan load-balancing policies to preserve order along the path.

- L2 Flow Control (L2FC).

(The cables connecting the nodes to the switches must be purchased from NetApp)
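To see why an L4 load-balancing policy preserves packet order, here is a minimal sketch of flow-based link selection on a port channel. It assumes a CRC32 hash over the 5-tuple as a stand-in for whatever hash a real switch ASIC uses; the `FiveTuple` and `pick_member_link` names, the addresses, and the port numbers are all hypothetical.

```python
import zlib
from typing import NamedTuple


class FiveTuple(NamedTuple):
    """The fields that identify one L4 flow."""
    src_ip: str
    dst_ip: str
    protocol: int   # IP protocol number, e.g. 6 for TCP
    src_port: int
    dst_port: int


def pick_member_link(flow, num_links):
    """Map a flow to one member link of a port channel.

    CRC32 is an assumption for illustration, not the hash of any
    specific switch. Every packet of the same flow yields the same
    index, so packets within that flow cannot overtake each other
    by travelling over different links.
    """
    if num_links < 1:
        raise ValueError("a port channel needs at least one member link")
    key = "|".join(str(field) for field in flow).encode()
    return zlib.crc32(key) % num_links


if __name__ == "__main__":
    # Hypothetical flows using documentation addresses (192.0.2.0/24).
    flow_a = FiveTuple("192.0.2.10", "192.0.2.20", 6, 49152, 3260)
    flow_b = FiveTuple("192.0.2.10", "192.0.2.20", 6, 49153, 3260)
    for flow in (flow_a, flow_b):
        print(flow, "-> member link", pick_member_link(flow, 4))
```

Different flows may land on different links, which spreads the load, while each individual flow always stays on one link.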

Besides, NetApp does not provide service for non-validated switches. So if you order MetroCluster IP without switches and decide to integrate it with third-party networking hardware, you will need to find a reliable, proven solution.

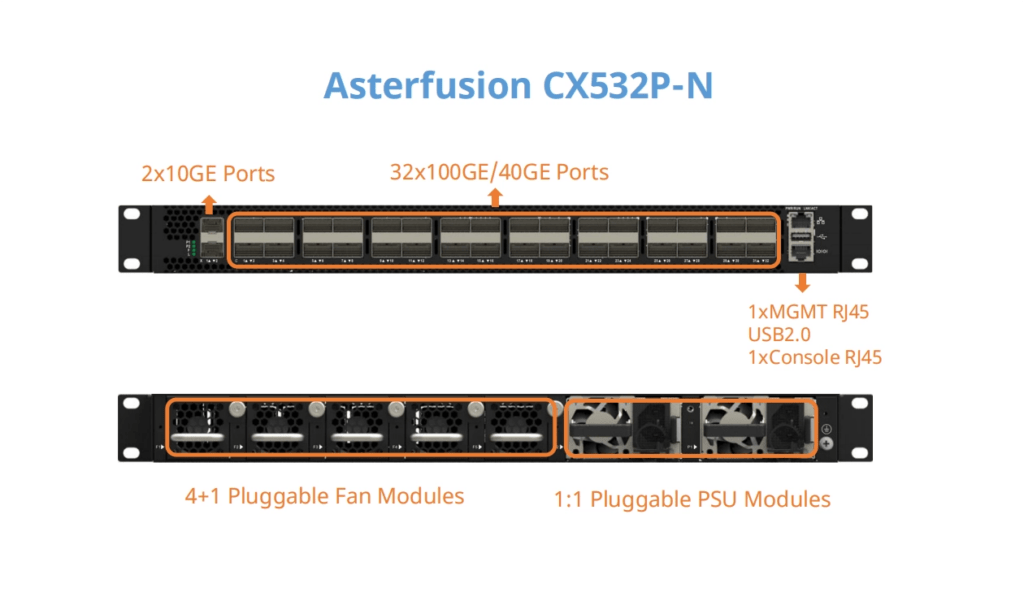

Asterfusion CX-N Data Center Switches – NetApp Compatible switch for Storage Fabric

Consider the Asterfusion CX532-N (32x100GbE) data center switch as a viable option. Our customers have confirmed its stability and compatibility with NetApp MetroCluster IP after thorough testing in their data centers.

- DSCP mapping: supported

- ECN: supported

- L4 port-VLAN load-balancing policy: a 5-tuple policy is supported; a VLAN policy is not required because there is only one VLAN in each port channel

- L2 flow control (L2FC): PFC supported
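The comparison between the switch requirements and the features listed above can be sketched as a simple checklist. The requirement keys and the pairing of each CX532-N feature with a requirement are my reading of the two lists in this article, not an official NetApp or Asterfusion compliance tool, and the names are hypothetical.

```python
# Requirement keys are illustrative labels for the four switch
# requirements listed earlier in this article.
REQUIRED = {
    "qos_classification",
    "ecn",
    "l4_load_balancing",
    "l2_flow_control",
}

# Features as listed in this article for the CX532-N; pairing each one
# with a requirement key is an assumption made for this sketch.
CX532N_FEATURES = {
    "qos_classification": "DSCP mapping",
    "ecn": "ECN",
    "l4_load_balancing": "5-tuple policy",
    "l2_flow_control": "PFC",
}


def missing_requirements(features):
    """Return the required capabilities a feature map does not cover."""
    return sorted(REQUIRED - set(features))


if __name__ == "__main__":
    gaps = missing_requirements(CX532N_FEATURES)
    print("Missing:", gaps if gaps else "none")
```

The same function can be pointed at the feature list of any other candidate switch to spot gaps before testing it in a lab.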

Asterfusion NetApp-Compatible Switches Support RoCEv2 for the Storage Fabric

The Asterfusion CX-N data center switches are designed to meet the demands of next-generation cloud data centers. They offer flexible deployment options across scenarios, with port speeds of 10/25/40/100/200/400GbE. With a per-port forwarding delay as low as 400 ns, they keep data transmission efficient. The CX-N switches also support RDMA over Converged Ethernet (RoCEv2), PFC, ECN, and DCBX, enabling highly efficient and cost-effective storage networks.